Machine learning is no longer confined to static datasets. Many modern problems — from energy forecasting and fraud detection to sensor analytics and cybersecurity — involve continuous streams of data. These streams arrive instance by instance, evolve over time, and often undergo concept drift, making traditional batch learning unsuitable.

This post is for ML practitioners and applied researchers working with evolving data streams who need high-throughput, adaptive learning – and want MOA-level performance in Python without sacrificing usability.

It is the final article in our Stream Mining series and builds on the foundations introduced in the earlier posts “Learning From Data Streams: Foundations of Stream Learning”, “Green Online Learning with Heterogeneous Ensembles” and “Streaming Gradient Boosting: Pushing Online Learning Beyond its Limits”, focusing on how these concepts come together in practice.

CapyMOA was developed to address these challenges. It is an open-source Python library for stream learning and online continual learning (OCL). First released publicly in May 2024, it aims to bring together the efficiency of MOA, the accessibility of Python, and the flexibility demanded by modern streaming applications.

MOA (Massive Online Analysis) was the first widely adopted open-source library for data stream learning. Originally implemented in Java around 2007, it is still actively used and maintained.

Why CapyMOA?

Existing tools strike different compromises:

- MOA (Java) is extremely efficient but less accessible to Python-centred workflows.

- River is user-friendly but written entirely in Python and struggles with high-throughput streams.

- Scikit-Multiflow bridged some gaps but is no longer actively developed.

For practitioners, this often leads to a trade-off between performance, usability, and long-term maintainability. CapyMOA bridges these worlds by focusing on three core pillars:

1. Efficiency

CapyMOA combines native Python implementations with access to MOA’s optimized Java algorithms through a modern Python API. Whenever suitable, it leverages MOA’s mature implementations via JPype with minimal overhead, while also providing its own growing ecosystem of stream learning methods. This gives users MOA-level performance where available, without writing a single line of Java.

Why this matters in practice:

Efficiency is critical in data stream learning, where models must process continuously arriving data under time and resource constraints. Faster implementations allow researchers and practitioners to work with longer and more realistic streams, run a larger number of experiments, and reduce computational costs. This makes large-scale evaluation and deployment more feasible, especially in resource-constrained environments. CapyMOA delivers this efficiency while remaining fully integrated within Python-based workflows.

2. Interoperability

CapyMOA integrates seamlessly with:

- MOA algorithms (wrapped automatically)

- scikit-learn models

- PyTorch components

This allows hybrid approaches that combine traditional online models with deep learning — especially useful for Online Continual Learning. This interoperability is key for applied ML teams that already rely on scikit-learn or PyTorch and want to extend existing pipelines to streaming scenarios instead of rebuilding everything from scratch.

3. Accessibility

The API is designed to be intuitive for general users while maintaining the flexibility required by advanced users, such as researchers and developers. This is reflected in features such as:

- Simple, high-level evaluation functions

- Built-in stream generators and benchmark datasets

- Drift-related functionality, including simulation, detection, and evaluation

- Automatic handling of data schemas and instances

- Visualisation tools for monitoring metrics

- A modular Pipelines API for composing streaming workflows

- An end-to-end framework for online continual learning (OCL), including experiment configuration, evaluation, and algorithms

Accessibility here does not mean oversimplification. It means reducing boilerplate for straightforward tasks—like rapidly benchmarking drift detectors—while retaining full control for advanced workflows, such as constructing pipelines with online preprocessing, multiple drift detectors, and custom metrics.

Learning from Data Streams with CapyMOA

Most stream-learning pipelines follow the test-then-train loop:

- Fetch the next instance

- Make a prediction

- Train the learner

- Update metrics

CapyMOA abstracts this loop into prequential evaluation functions, which reduces boilerplate and keeps evaluation consistent across algorithms, models, and experiments.

Example: Hoeffding Tree on the Electricity Dataset

from capymoa.datasets import Electricity

from capymoa.classifier import HoeffdingTree

from capymoa.evaluation import prequential_evaluation

stream = Electricity()

ht = HoeffdingTree(schema=stream.get_schema(), grace_period=50)

results = prequential_evaluation(stream=stream, learner=ht, window_size=4500)

print(results.cumulative.accuracy())

Windowed metrics are automatically stored in a pandas DataFrame and can be visualised with:

from capymoa.evaluation.visualization import plot_windowed_results

plot_windowed_results(results, metric="accuracy")
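To make the difference between cumulative and windowed metrics concrete, here is a minimal library-free sketch. The helper `windowed_accuracy` is a hypothetical name, and the way it treats a final partial window is an assumption of this sketch rather than a description of CapyMOA's behaviour.

```python
def windowed_accuracy(y_true, y_pred, window_size):
    """Accuracy computed separately over consecutive, non-overlapping windows."""
    accuracies = []
    for start in range(0, len(y_true), window_size):
        truth = y_true[start:start + window_size]
        preds = y_pred[start:start + window_size]
        hits = sum(1 for t, p in zip(truth, preds) if t == p)
        # Assumption: a shorter final window is scored on the instances it has.
        accuracies.append(hits / len(truth))
    return accuracies


y_true = [1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 0, 0, 0]

per_window = windowed_accuracy(y_true, y_pred, window_size=2)
cumulative = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(per_window, cumulative)
```

The cumulative figure averages over the whole stream, while the per-window values reveal where performance dips, which is the information a windowed plot is meant to surface.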

Example: Online Bagging on the Electricity Dataset

It is still possible to control the loop and evaluate explicitly, as in the example below.

from capymoa.datasets import Electricity

from capymoa.evaluation import ClassificationEvaluator

from capymoa.classifier import OnlineBagging

elec_stream = Electricity()

ob_learner = OnlineBagging(schema=elec_stream.get_schema(), ensemble_size=5)

ob_evaluator = ClassificationEvaluator(schema=elec_stream.get_schema())

for instance in elec_stream:

    # Predict first, then train on the same instance (test-then-train)

    prediction = ob_learner.predict(instance)

    ob_learner.train(instance)

    ob_evaluator.update(instance.y_index, prediction)

print(ob_evaluator.accuracy())

This flexibility allows users to trade abstraction for fine-grained control whenever required. For more, have a look at the CapyMOA notebook.

Schemas and Instances: Data Consistency by Design

Unlike frameworks that rely on Python dictionaries, CapyMOA enforces a Schema for every stream. This avoids silent errors, ensures stable long-term experiments, and maintains compatibility across Python and Java components. Whether you load a CSV or ARFF file, or rely on synthetic generators, every instance carries structured metadata, making interoperability reliable and automatic across the entire pipeline.

In long-running stream experiments, small inconsistencies can silently invalidate results. For example, in a dictionary-based instance representation, the absence of a feature can be ambiguous: it may indicate a missing value, or that the feature no longer exists. With explicit Schema tracking, this distinction becomes clear — the system can detect whether the feature set itself has changed or whether a value is simply missing. This allows the learner (or other components in the pipeline) to adapt appropriately to schema evolution. Schema enforcement therefore makes such changes explicit and reproducible, which is a critical advantage in both research and production settings.
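The ambiguity described above can be illustrated with a small sketch. `ToySchema` and `check_instance` are hypothetical stand-ins written for this post; they are not CapyMOA's `Schema` class and only mimic the idea of validating each record against a declared feature set.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class ToySchema:
    """Hypothetical stand-in for a stream schema: a fixed, ordered feature list."""
    features: Tuple[str, ...]


def check_instance(schema: ToySchema, values: Dict[str, Optional[float]]) -> str:
    """Classify an incoming record relative to the declared schema."""
    declared = set(schema.features)
    present = set(values)
    if present - declared:
        return "schema change: unknown feature(s) " + ", ".join(sorted(present - declared))
    if declared - present:
        return "schema change: feature(s) dropped " + ", ".join(sorted(declared - present))
    if any(v is None for v in values.values()):
        return "missing value"
    return "ok"


schema = ToySchema(("temperature", "humidity"))
missing = check_instance(schema, {"temperature": 21.5, "humidity": None})
dropped = check_instance(schema, {"temperature": 21.5})
print(missing)
print(dropped)
```

With a bare dictionary, both records would simply lack a usable `humidity` value. With a declared schema, the first is recognised as a missing value and the second as a change in the feature set.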

Concept Drift: A First-Class Citizen

CapyMOA provides a complete system for working with drift:

- Drift simulation using DriftStream, AbruptDrift, and GradualDrift

- Integration with evaluation and visualisation tools

- Native support for classic and modern drift detectors

Example: building a stream with one abrupt and one gradual drift:

from capymoa.stream.drift import DriftStream, AbruptDrift, GradualDrift

from capymoa.stream.generator import SEA

from capymoa.evaluation.visualization import plot_windowed_results

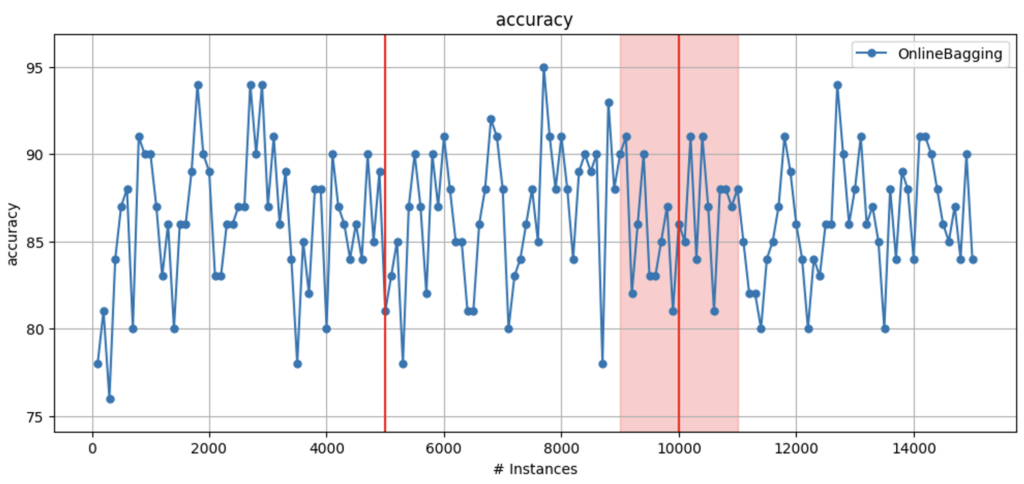

stream_sea2drift = DriftStream(

stream=[

SEA(function=1),

AbruptDrift(position=5000),

SEA(function=3),

GradualDrift(position=10000, width=2000),

# GradualDrift(start=9000, end=12000),

SEA(function=1),

]

)

OB = OnlineBagging(schema=stream_sea2drift.get_schema(), ensemble_size=10)

results_sea2drift_OB = prequential_evaluation(

stream=stream_sea2drift, learner=OB, window_size=100, max_instances=15000

)

print(

f"The definition of the DriftStream is accessible through the object:\n {stream_sea2drift}"

)

plot_windowed_results(results_sea2drift_OB, metric="accuracy")Plots automatically show the drift positions, helping researchers diagnose when and why models struggle. This makes drift not just detectable, but interpretable — a crucial step toward trustworthy adaptive systems.

For more, have a look in the CapyMOA notebook.
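For intuition about what `position` and `width` mean in a gradual drift, the sketch below mixes two concepts using a sigmoid-shaped probability, a common formulation in stream generators such as MOA's ConceptDriftStream. Whether CapyMOA's `GradualDrift` follows exactly this curve is an assumption here, and the helpers `new_concept_probability` and `mix_concepts` are hypothetical names.

```python
import math
import random


def new_concept_probability(t: int, position: int, width: int) -> float:
    """Probability that instance t is drawn from the new concept (sigmoid in t)."""
    z = -4.0 * (t - position) / width
    if z > 700.0:  # guard against overflow in math.exp long before the drift
        return 0.0
    return 1.0 / (1.0 + math.exp(z))


def mix_concepts(old_concept, new_concept, position, width, n, seed=0):
    """Yield n instances, switching gradually from old_concept to new_concept."""
    rng = random.Random(seed)
    for t in range(n):
        p_new = new_concept_probability(t, position, width)
        source = new_concept if rng.random() < p_new else old_concept
        yield source(t)


# Label each instance by the concept that produced it.
labels = list(
    mix_concepts(lambda t: "old", lambda t: "new", position=10000, width=2000, n=15000)
)
for t in (9000, 10000, 11000):
    print(t, round(new_concept_probability(t, 10000, 2000), 4))
```

Under this formulation the two concepts are equally likely at `position`, and most of the transition happens within roughly `width` instances around it. An abrupt drift is the limiting case of a very small width.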

Pipelines: Modular, Flexible, and Ready for AutoML

Streaming pipelines are notoriously tricky because transformations must maintain state across time. CapyMOA introduces a flexible pipeline system that supports:

- Feature scalers

- Drift detectors

- Incremental learners

- Custom components via PipelineElement

- Multi-detector pipelines (e.g., ABCD + ADWIN)

This design enables experimentation with complex adaptive pipelines while preserving correctness over time. It also lays the groundwork for AutoML for data streams, dynamically selecting and tuning pipelines as the data changes.
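To see why stateful transformations need care on a stream, consider a scaler that cannot look at the whole dataset in advance. The following sketch uses Welford's online algorithm to maintain a running mean and variance. `RunningStandardScaler` is a hypothetical class written for illustration and is not part of the CapyMOA API.

```python
import math


class RunningStandardScaler:
    """Standardises one numeric feature with statistics seen so far (Welford's algorithm)."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    def transform(self, x: float) -> float:
        # With fewer than two observations there is no spread to scale by.
        if self.n < 2:
            return 0.0
        std = math.sqrt(self.m2 / self.n)  # population standard deviation
        return (x - self.mean) / std if std > 0 else 0.0


scaler = RunningStandardScaler()
scaled = []
for x in [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]:
    scaled.append(scaler.transform(x))  # transform using past statistics only
    scaler.update(x)                    # then fold the new value into the state

print(scaler.mean, math.sqrt(scaler.m2 / scaler.n))
```

Transforming before updating mirrors the test-then-train order: the value is scaled using only information available at prediction time, so no future data leaks into the features.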

Example: Applying normalization and adding noise

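from capymoa.classifier import OnlineBagging

from capymoa.datasets import Electricity

from capymoa.evaluation import ClassificationEvaluator

from capymoa.stream.preprocessing import MOATransformer, ClassifierPipeline

from moa.streams.filters import AddNoiseFilter, NormalisationFilter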

elec_stream = Electricity()

# Creating the transformers

normalisation_transformer = MOATransformer(

schema=elec_stream.get_schema(), moa_filter=NormalisationFilter()

)

add_noise_transformer = MOATransformer(

schema=normalisation_transformer.get_schema(), moa_filter=AddNoiseFilter()

)

# Creating a learner

ob_learner = OnlineBagging(schema=add_noise_transformer.get_schema(), ensemble_size=5)

# Creating and populating the pipeline

pipeline = (

ClassifierPipeline()

.add_transformer(normalisation_transformer)

.add_transformer(add_noise_transformer)

.add_classifier(ob_learner)

)

# Creating the evaluator

ob_evaluator = ClassificationEvaluator(schema=elec_stream.get_schema())

while elec_stream.has_more_instances():

    instance = elec_stream.next_instance()

    # Predict with the full pipeline before training on the instance

    prediction = pipeline.predict(instance)

    ob_evaluator.update(instance.y_index, prediction)

    pipeline.train(instance)

print(ob_evaluator.accuracy())

For more, have a look at the CapyMOA notebooks.

Online Continual Learning (OCL): Beyond Classic Stream Learning

Traditional stream learning handles concept drift but usually assumes supervised labels arrive promptly. OCL, however, tackles:

- Incremental tasks

- Domain shifts

- Sparse or delayed labels

- Memory constraints

CapyMOA integrates OCL natively, allowing both classic online models and neural-based continual learners to operate on the same evaluation framework.

This unified treatment of stream learning and continual learning is still rare. It positions CapyMOA at the intersection of two rapidly growing research areas, as one of the first frameworks to integrate both.

Example: executing Experience Replay

from capymoa.ann import Perceptron

from capymoa.classifier import Finetune

from capymoa.ocl.strategy import ExperienceReplay

from capymoa.ocl.datasets import TinySplitMNIST

from capymoa.ocl.evaluation import ocl_train_eval_loop

import torch

_ = torch.manual_seed(0)

scenario = TinySplitMNIST()

model = Perceptron(scenario.schema)

learner = ExperienceReplay(Finetune(scenario.schema, model))

results = ocl_train_eval_loop(

learner,

scenario.train_loaders(32),

scenario.test_loaders(32),

)

print(f"{results.accuracy_final*100:.1f}%")

For more, have a look at the CapyMOA notebook.
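The idea behind experience replay under a memory constraint can be sketched without any deep learning code. The example below keeps a fixed-size buffer filled by reservoir sampling, a common choice for replay memories, and mixes stored examples into each incoming batch. `ReservoirBuffer` and `replay_batches` are hypothetical names, and nothing here should be read as a description of how CapyMOA's `ExperienceReplay` is implemented internally.

```python
import random


class ReservoirBuffer:
    """Fixed-size memory in which every example seen so far is kept with equal probability."""

    def __init__(self, capacity: int, seed: int = 0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, example) -> None:
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
            return
        # Overwrite a random slot with probability capacity / seen.
        j = self.rng.randrange(self.seen)
        if j < self.capacity:
            self.items[j] = example

    def sample(self, k: int):
        return self.rng.sample(self.items, min(k, len(self.items)))


def replay_batches(stream_batches, buffer: ReservoirBuffer, replay_size: int):
    """Yield each incoming batch extended with examples replayed from memory."""
    for batch in stream_batches:
        replayed = buffer.sample(replay_size)
        yield list(batch) + replayed
        for example in batch:
            buffer.add(example)


buffer = ReservoirBuffer(capacity=5)
batches = [[(f"x{i}", i % 2) for i in range(s, s + 4)] for s in range(0, 20, 4)]
mixed = list(replay_batches(batches, buffer, replay_size=2))
print([len(b) for b in mixed], len(buffer.items), buffer.seen)
```

A learner trained on the mixed batches keeps revisiting a small sample of earlier data, which is what lets replay reduce forgetting while memory use stays bounded by the buffer capacity.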

Conclusion

CapyMOA aims to be the modern foundation for research and practice in adaptive machine learning. By balancing efficiency, interoperability, and usability, it provides a platform where:

- Researchers can build and test new algorithms quickly.

- Practitioners can use robust, high-performance tools for real-time data.

- Students can learn stream learning concepts through clear, simple examples.

As CapyMOA evolves, upcoming work includes deeper OCL integration, improved drift diagnostics, enhanced deep learning pipelines, and expanded support for clustering and anomaly detection.

CapyMOA is open-source and welcomes contributions:

Website: https://capymoa.org

GitHub: https://github.com/adaptive-machine-learning/CapyMOA

Tutorials: https://capymoa.org/tutorials.html

Discord: https://discord.gg/spd2gQJGAb