Building on Dr. Sebastian Buschjäger’s blog post “Learning From Data Streams: Foundations of Stream Learning”, this second article in our Stream Mining series explores how heterogeneous online ensembles like HEROS enable accurate, resource-efficient learning under concept drift.

Why One Model Isn’t Always Enough

Relying on a single machine learning model for prediction isn’t always the most reliable approach. Multiple opinions can improve decision-making and often lead to more accurate and trustworthy results. This approach is referred to as ensemble learning.

The core idea of ensemble learning is to aggregate the outputs of two or more models into a single, improved prediction. There are many ways to combine the results of individual models within an ensemble. Some of the simplest approaches include averaging their predictions or selecting the most confident model for an input. However, while ensembles can boost performance, they come at a cost.
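
To make the two simple combination rules concrete, here is a minimal Python sketch that averages class probabilities and, alternatively, picks the most confident model. The function names and the toy probability vectors are illustrative assumptions, not part of HEROS or MOA.

```python
from typing import List, Sequence


def average_probabilities(probas: Sequence[Sequence[float]]) -> List[float]:
    """Average the class-probability vectors of several models."""
    n_models = len(probas)
    n_classes = len(probas[0])
    return [sum(p[c] for p in probas) / n_models for c in range(n_classes)]


def most_confident(probas: Sequence[Sequence[float]]) -> List[float]:
    """Return the vector of the model with the highest top-class probability."""
    return list(max(probas, key=max))


# Toy outputs of three models for one input with two classes (made-up numbers).
probas = [
    [0.60, 0.40],
    [0.20, 0.80],
    [0.55, 0.45],
]

avg = average_probabilities(probas)
print([round(v, 2) for v in avg])  # [0.45, 0.55]
print(most_confident(probas))      # [0.2, 0.8]
```

Note that two of the three models favor class 0, yet both rules end up predicting class 1 because the second model is much more confident.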

Processing time plays a crucial role, especially in stream learning and real-time scenarios. Before we dive into reducing costs, though, let’s first look at how we can boost diversity within the ensemble.

Embracing Heterogeneity in Ensembles

An ensemble becomes particularly powerful when its members are diverse. Diversity (or heterogeneity) ensures that models make different types of errors, which can then be averaged out when the models are combined, improving overall robustness.

Yet, configuring hyperparameters for models operating on continuously changing, unseen data remains a major challenge. While adaptive models can handle concept drift, their hyperparameters often still need manual tuning. To overcome this, Heterogeneous Online Ensembles (HEROS) introduces a novel approach: from a pool of models initialized with diverse hyperparameter choices, it chooses a subset to train under resource constraints. This built-in diversity removes the need for manual hyperparameter tuning.

The HEROS Framework

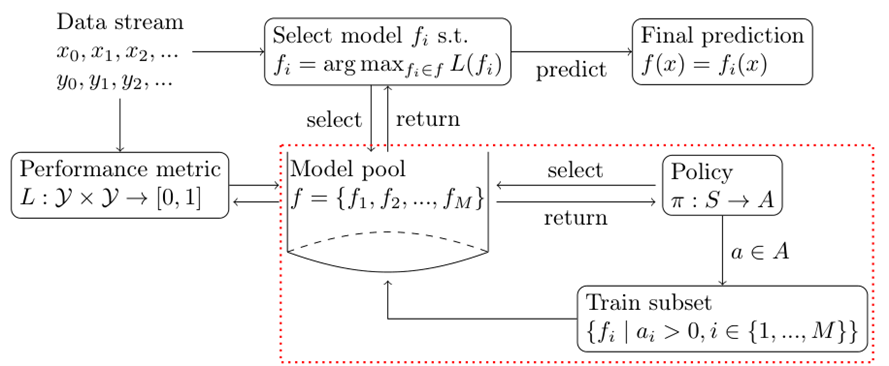

HEROS is a framework designed to train an ensemble of models on streaming data while operating under resource constraints. In this setting, a policy determines which ensemble members should be updated (i.e., trained) with each new incoming instance from the stream. The schematic overview in Figure 2 illustrates this process, with the red dotted area indicating the component we focus on.

Let $L: Y \times Y \to [0,1]$ denote a normalized performance metric that we aim to maximize. For each incoming instance, the framework selects the model $f_i$ with the highest predictive performance to produce the final prediction. Meanwhile, to ensure all models in the ensemble (denoted as the pool $f = (f_1, f_2, \dots, f_m)$) remain up to date, the policy $\pi$ decides dynamically which $k$ models should be trained on each instance.
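
The loop described here can be sketched in a few lines of Python. This is a simplified illustration under several assumptions: the toy learner `MajorityClassModel`, the `RunningAccuracy` estimator standing in for $L$, the helper `heros_step`, and the test-then-train ordering are all hypothetical and do not reflect the MOA implementation.

```python
from collections import Counter


class MajorityClassModel:
    """Toy learner: predicts the label it has been trained on most often."""

    def __init__(self, default: int = 0):
        self.counts = Counter()
        self.default = default

    def predict(self, x) -> int:
        return self.counts.most_common(1)[0][0] if self.counts else self.default

    def learn(self, x, y) -> None:
        self.counts[y] += 1


class RunningAccuracy:
    """Assumed stand-in for the normalized metric L, with values in [0, 1]."""

    def __init__(self):
        self.correct = 0
        self.seen = 0

    def update(self, hit: bool) -> None:
        self.correct += int(hit)
        self.seen += 1

    @property
    def value(self) -> float:
        return self.correct / self.seen if self.seen else 0.0


def perform_best(scores, k):
    """Example policy: indices of the k models with the highest score."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]


def heros_step(models, perf, x, y, policy, k):
    """Process one instance: predict, update estimates, train k models."""
    scores = [p.value for p in perf]
    best = max(range(len(models)), key=lambda i: scores[i])
    y_hat = models[best].predict(x)        # prediction of the best model
    for model, p in zip(models, perf):     # evaluate every model first
        p.update(model.predict(x) == y)
    for i in policy(scores, k):            # train only the selected models
        models[i].learn(x, y)
    return y_hat


models = [MajorityClassModel(default=0), MajorityClassModel(default=1)]
perf = [RunningAccuracy() for _ in models]
stream = [((), 1)] * 5                     # five instances, all with label 1
predictions = [heros_step(models, perf, x, y, perform_best, k=1) for x, y in stream]
print(predictions)  # [0, 1, 1, 1, 1]
```

After the first mistake, the second model has the better estimate and takes over the prediction, while only one model is trained per instance.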

But how do we decide which models in the ensemble should be trained?

An easy starting point is to select the $k$ individual models according to one of the following simple policies:

- Perform-best, which chooses the models $f_i$ with the highest current performance $L(f_i)$,

- Perform-worse, which focuses on the models with the lowest current performance $L(f_i)$ to give them a chance to adapt and improve,

- Cheapest, which chooses the models with the lowest resource consumption,

- Expensive, which chooses the models with the highest resource cost, or

- CAND, proposed by Gunasekara et al., which combines these ideas by selecting half of the models based on their best performance and the other half at random.
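
These selection rules can be sketched as small Python functions that return the indices of the models to train. The function names, the toy numbers, and two details of `cand` (rounding down for odd $k$ and drawing the random half from the not-yet-selected models) are assumptions made for illustration, not taken from the original papers.

```python
import random
from typing import List, Optional, Sequence


def top_k(values: Sequence[float], k: int, largest: bool) -> List[int]:
    """Indices of the k largest (or smallest) entries of `values`."""
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=largest)
    return order[:k]


def perform_best(perf, cost, k):
    return top_k(perf, k, largest=True)


def perform_worse(perf, cost, k):
    return top_k(perf, k, largest=False)


def cheapest(perf, cost, k):
    return top_k(cost, k, largest=False)


def expensive(perf, cost, k):
    return top_k(cost, k, largest=True)


def cand(perf, cost, k, rng: Optional[random.Random] = None):
    """Half of the models by best performance, the other half at random."""
    rng = random.Random() if rng is None else rng
    n_best = k // 2                      # assumption: round down for odd k
    best = top_k(perf, n_best, largest=True)
    rest = [i for i in range(len(perf)) if i not in best]
    return best + rng.sample(rest, k - n_best)


perf = [0.9, 0.6, 0.8, 0.7]   # made-up performance estimates L(f_i)
cost = [5.0, 1.0, 2.0, 4.0]   # made-up resource costs

print(perform_best(perf, cost, 2))   # [0, 2]
print(perform_worse(perf, cost, 2))  # [1, 3]
print(cheapest(perf, cost, 2))       # [1, 2]
print(expensive(perf, cost, 2))      # [0, 3]
print(cand(perf, cost, 2, random.Random(0)))  # model 0 plus one random other model
```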

Computational resources, such as processing power and energy, are costly, so we aim to minimize resource usage while maintaining strong predictive performance. However, as data drifts and the underlying distribution evolves, not every model remains suitable for the current concept. Ideally, we want to prioritize training for ensemble members that:

- Currently show strong predictive performance, and

- Require fewer resources to update.

Balancing these two objectives creates a multi-objective optimization problem, which is computationally expensive to solve exactly. To manage this complexity, HEROS introduces the $\zeta$-policy.

Green Learning by Saving Resources

Rather than focusing solely on maximizing predictive performance, HEROS employs a simple yet effective policy called $\zeta$-policy. The policy iteratively selects $k$ models based on:

- Low resource cost $Y_i$, and

- Acceptable predictive performance, i.e., the model’s performance must not be worse than $1-\zeta$ times the performance of the best not-yet-selected model.

For example, in Figure 2, $f_1$ has the highest predictive performance, but $f_3$ performs within $(1-\zeta) L(f_1)$ and requires fewer resources to train. In that case, the policy favors $f_3$ over $f_1$.

To explore the remaining state space, however, the policy follows an $\epsilon$-greedy strategy: with probability $\epsilon$, a random combination of $k$ models is selected instead.
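
The selection rule described above can be sketched as follows. This is one possible reading of the description in this post, not the reference implementation from MOA: the function name `zeta_policy`, breaking cost ties by index, and the toy numbers are assumptions, and the sketch expects $k$ to be no larger than the pool size.

```python
import random
from typing import List, Optional, Sequence


def zeta_policy(
    perf: Sequence[float],
    cost: Sequence[float],
    k: int,
    zeta: float,
    epsilon: float = 0.0,
    rng: Optional[random.Random] = None,
) -> List[int]:
    """Pick k model indices: cheap models with near-best performance."""
    rng = random.Random() if rng is None else rng
    indices = list(range(len(perf)))

    # Exploration: with probability epsilon, pick k models at random.
    if rng.random() < epsilon:
        return rng.sample(indices, k)

    # Exploitation: repeatedly take the cheapest model whose performance is
    # at least (1 - zeta) times that of the best not-yet-selected model.
    selected: List[int] = []
    remaining = set(indices)
    for _ in range(k):
        best = max(perf[i] for i in remaining)
        candidates = [i for i in remaining if perf[i] >= (1 - zeta) * best]
        choice = min(candidates, key=lambda i: (cost[i], i))
        selected.append(choice)
        remaining.remove(choice)
    return selected


# Toy pool (made-up numbers): f_1 is best, f_3 is almost as good but cheaper.
perf = [0.90, 0.70, 0.88]
cost = [5.0, 1.0, 2.0]

print(zeta_policy(perf, cost, k=1, zeta=0.05))  # [2], i.e. f_3 is preferred
print(zeta_policy(perf, cost, k=2, zeta=0.05))  # [2, 0]
print(zeta_policy(perf, cost, k=2, zeta=0.0))   # [0, 2]
```

In this sketch, $\zeta = 0$ admits only the best remaining model at each step, so the selection coincides with perform-best. Larger values of $\zeta$ trade a bounded loss in performance for cheaper updates.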

So far, so good, but maybe you were wondering: how do we measure resources during computation? Let’s go through some options:

- Pre-defined and fixed resource costs: e.g., the number of hidden nodes and layers in a neural network, or the maximum tree depth of a Hoeffding tree,

- Training time: the average time needed to train on a single instance, measured over the most recent instances stored in a window,

- Memory consumption: measured analogously to the training time.
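
As a rough sketch of the windowed measurement, the following Python snippet keeps the most recent training times in a fixed-size window and reports their mean. The class `WindowedAverage`, the helper `timed_learn`, and the assumption that a model exposes a `learn(x, y)` method are hypothetical choices for illustration. The same tracker could hold memory readings instead of seconds.

```python
import time
from collections import deque


class WindowedAverage:
    """Mean of the most recent `window` measurements; older ones are dropped."""

    def __init__(self, window: int = 100):
        self.values = deque(maxlen=window)

    def add(self, value: float) -> None:
        self.values.append(value)

    @property
    def mean(self) -> float:
        return sum(self.values) / len(self.values) if self.values else 0.0


def timed_learn(model, x, y, tracker: WindowedAverage) -> None:
    """Train on one instance and record the elapsed wall-clock time in seconds."""
    start = time.perf_counter()
    model.learn(x, y)  # assumed model interface
    tracker.add(time.perf_counter() - start)


# With a window of 3, only the last three measurements count.
tracker = WindowedAverage(window=3)
for seconds in [1.0, 2.0, 3.0, 4.0]:
    tracker.add(seconds)
print(tracker.mean)  # 3.0
```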

Drift Reaction

As the data distribution evolves, models that once performed well may lose effectiveness. HEROS updates the estimated predictive performance of each model continuously, allowing the policies to respond quickly to concept drift.
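
To see why continuously updated estimates help under drift, consider the following toy sketch. It assumes a sliding-window accuracy as the estimator, which this post does not specify, so `WindowedAccuracy` and the simulated stream are purely illustrative.

```python
from collections import deque


class WindowedAccuracy:
    """Accuracy over the most recent `window` predictions (assumed estimator)."""

    def __init__(self, window: int = 50):
        self.hits = deque(maxlen=window)

    def update(self, correct: bool) -> None:
        self.hits.append(1 if correct else 0)

    @property
    def value(self) -> float:
        return sum(self.hits) / len(self.hits) if self.hits else 0.0


acc_a = WindowedAccuracy(window=10)
acc_b = WindowedAccuracy(window=10)

# Simulated stream: model A is always right before the drift at t = 20,
# model B is always right afterwards.
for t in range(40):
    drifted = t >= 20
    acc_a.update(not drifted)
    acc_b.update(drifted)

print(acc_a.value, acc_b.value)  # 0.0 1.0
```

Because old outcomes leave the window, the ranking of the two models flips shortly after the drift, and any performance-based policy would shift its training budget accordingly.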

This adaptability is visible in the evaluation results:

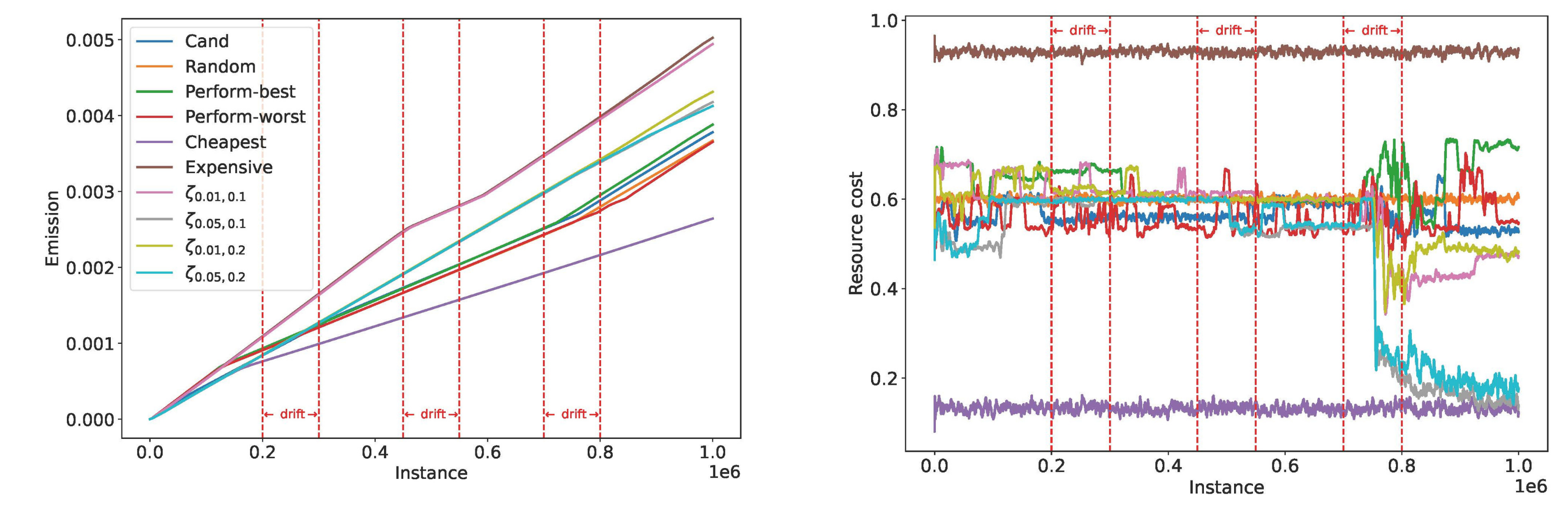

In Figure 3(a), the reasoning behind the policy names becomes clear: Cheapest and Expensive reflect the range of resource costs, while short-term dips in CO2 emissions around the drifts indicate temporary adjustments. In Figure 3(b), the resource consumption under the $\zeta$-policy (pink, grey, green, and blue lines) shifts following a gradual drift. After the drift, a new subset of models is selected for training as others begin to perform better on the new concept.

Across 11 benchmark datasets (more details in the paper), HEROS consistently advances the state of the art, and in several cases, outperforms leading online ensembles such as Adaptive Random Forest, Streaming Random Patches, and Shrub ensembles.

Let’s have a quick look at the theoretical foundations.

Some Theory

The asymptotic behavior of different policies has been analyzed using a stochastic model (more details in the paper). Given a pool of size $M$, from which $k$ models are selected according to a policy, we established the following theorems:

- The average performance (with probability $1-\epsilon$) achieved by applying the $\zeta$-policy is higher than that achieved by applying the CAND policy as $M \to \infty$ and then $k \to \infty$.

- The average resource consumption (with probability $1-\epsilon$) achieved by applying the $\zeta$-policy is lower than that achieved by applying the CAND policy as $M \to \infty$ and then $k \to \infty$.

Key insight: When models are selected non-randomly (exploitation, which happens with probability $1-\epsilon$ for $\epsilon \ll 1$), the $\zeta$-policy achieves higher average predictive performance than CAND for small $\zeta$, while its average resource costs are lower. Moreover, the paper also proves that the resource costs are asymptotically lower under the $\zeta$-policy than under perform-best, while leveraging models with at most $\zeta$ worse predictive performance.

Wrapping up

When little is known about the current data stream or when hyperparameters cannot be pre-tuned, HEROS offers an ideal solution. To wrap up, the key advantages of HEROS are:

- No hyperparameter tuning, thanks to its heterogeneous design

- Efficient resource usage during training

- Fast adaptation to concept drift

- Flexible policy integration

- … and much more!

You can explore HEROS in action in the latest release of the open-source MOA library!

Literature

- Kirsten Köbschall, Sebastian Buschjäger, Raphael Fischer, Lisa Hartung, and Stefan Kramer. 2025. Lift What You Can: Green Online Learning with Heterogeneous Ensembles. arXiv:2509.18962.

- Nuwan Gunasekara, Heitor Murilo Gomes, Bernhard Pfahringer, and Albert Bifet. 2022. Online Hyperparameter Optimization for Streaming Neural Networks. In 2022 International Joint Conference on Neural Networks (IJCNN). 1–9.