Incomplete data is a recurring problem in Machine Learning. However, with a probability-based approach, the gaps in such datasets can be filled, making them usable for further processing.

Data is indispensable in the context of Machine Learning. In many domains, datasets may be incomplete due to errors in measurement or processing. This is evident, for example, in the UCI Machine Learning Repository, where two of the five most popular datasets contain missing entries (“Adult” and “Heart Disease”). Learning models from data with gaps can be difficult and, in some cases, even impossible. However, generative approaches can help by filling in incomplete data with meaningful approximations.

Probability-based modeling

The entries of any dataset can be interpreted as concrete realizations of a random vector. Due to incompleteness, individual components of the vector are unobserved. Depending on the dataset, different components may be missing for each entry. When modeling the random vector, it is generally necessary to consider that individual components may be statistically dependent on each other. Such dependencies can be well represented by probabilistic graphical models, such as Markov Random Fields (MRFs).

In MRFs, dependencies are described by an undirected graph. It is assumed that realizations of the random vector come from a discrete state space, so some datasets may first need to be discretized. MRFs make it possible to calculate the multivariate probability distribution of the random vector. Through marginalization, the probability of any partial assignment, and hence of any conditional assignment, can be calculated as well. For an incomplete dataset, this means that the plausibility of different predictions for the missing entries can be assessed. First, however, the parameters of the MRF must be estimated from the available data during training. Traditionally, this is done through Maximum Likelihood Estimation, which requires fully observed data.
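To make these quantities concrete, here is a minimal sketch assuming a hypothetical toy MRF: three binary variables on a chain X0 - X1 - X2 with hand-picked log-potentials on each edge (all names and values are illustrative, not taken from the paper). The joint distribution is computed by brute-force enumeration, which only works for tiny models; the marginal and conditional probabilities are then obtained by summing out the unobserved variables.

```python
import itertools
import math

# Hypothetical toy model: three binary variables on a chain X0 - X1 - X2.
N_VARS = 3
STATES = (0, 1)
EDGES = [(0, 1), (1, 2)]
# Illustrative log-potentials THETA[(u, v)][x_u][x_v].
THETA = {
    (0, 1): [[1.0, -1.0], [-1.0, 1.0]],  # X0 and X1 strongly prefer to agree
    (1, 2): [[0.5, 0.0], [0.0, 0.5]],    # weaker preference for X1 == X2
}


def score(x):
    """Unnormalized log-probability: sum of the edge log-potentials."""
    return sum(THETA[(u, v)][x[u]][x[v]] for u, v in EDGES)


def joint():
    """Exact joint distribution p(x) = exp(score(x)) / Z by full enumeration."""
    states = list(itertools.product(STATES, repeat=N_VARS))
    weights = [math.exp(score(x)) for x in states]
    z = sum(weights)  # partition function
    return {x: w / z for x, w in zip(states, weights)}


def prob(partial, p):
    """Marginal probability of a partial assignment {var: value}: sum out the rest."""
    return sum(px for x, px in p.items()
               if all(x[i] == v for i, v in partial.items()))


def conditional(query, evidence):
    """P(query | evidence) = P(query, evidence) / P(evidence)."""
    p = joint()
    return prob({**evidence, **query}, p) / prob(evidence, p)


# Incomplete entry (X0=1, X1=?, X2=0): compare both candidates for X1.
for value in STATES:
    print(f"P(X1={value} | X0=1, X2=0) = {conditional({1: value}, {0: 1, 2: 0}):.3f}")
```

For the entry (X0=1, X1=?, X2=0), the sketch assigns roughly 0.82 to X1=1 and 0.18 to X1=0, so X1=1 is the more plausible completion under these illustrative parameters.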

Parameter estimation with incomplete data

Fortunately, an MRF can also be trained on incomplete data. This involves performing Maximum Likelihood Estimation within an Expectation-Maximization scheme: the algorithm alternates between filling in the missing parts of the dataset by sampling from the current model and re-estimating the parameters on the completed data.
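The alternation can be sketched as a stochastic (Monte Carlo) variant of EM. This is a minimal sketch under several assumptions: a hypothetical chain MRF over three binary variables, written as an exponential-family model with one indicator feature per edge and state pair; exact inference by enumeration; and plain gradient ascent as the M-step. The function names, learning rate, iteration counts, and toy data are illustrative choices, not the paper's settings.

```python
import itertools
import math
import random

# Hypothetical setup: chain MRF X0 - X1 - X2 over binary states; None marks a missing entry.
N_VARS, STATES, EDGES = 3, (0, 1), [(0, 1), (1, 2)]
ALL_STATES = list(itertools.product(STATES, repeat=N_VARS))


def features(x):
    """Overcomplete sufficient statistics: one indicator per (edge, x_u, x_v)."""
    f = [0.0] * (4 * len(EDGES))
    for e, (u, v) in enumerate(EDGES):
        f[4 * e + 2 * x[u] + x[v]] = 1.0
    return f


FEATS = [features(x) for x in ALL_STATES]


def joint(theta):
    """Exact p(x) = exp(<theta, phi(x)>) / Z by enumeration (tiny models only)."""
    w = [math.exp(sum(t * f for t, f in zip(theta, fx))) for fx in FEATS]
    z = sum(w)
    return [wi / z for wi in w]


def sample_completion(row, theta, rng):
    """Stochastic E-step for one row: draw the missing entries from p(x_mis | x_obs)."""
    if None not in row:
        return tuple(row)
    cands = [(x, px) for x, px in zip(ALL_STATES, joint(theta))
             if all(r is None or r == xi for r, xi in zip(row, x))]
    states, weights = zip(*cands)
    return rng.choices(states, weights=weights)[0]


def stochastic_em(data, n_iter=50, m_steps=20, lr=0.5, seed=0):
    rng = random.Random(seed)
    theta = [0.0] * (4 * len(EDGES))  # uniform model, so the first E-step is purely random
    for _ in range(n_iter):
        # E-step: complete every row by sampling from the current model.
        completed = [sample_completion(row, theta, rng) for row in data]
        empirical = [sum(col) / len(completed)
                     for col in zip(*(features(x) for x in completed))]
        # M-step: gradient ascent on the log-likelihood of the completed data;
        # the gradient is (empirical statistics) - (statistics expected under the model).
        for _ in range(m_steps):
            p = joint(theta)
            expected = [sum(px * fx[k] for px, fx in zip(p, FEATS))
                        for k in range(len(theta))]
            theta = [t + lr * (e - m) for t, e, m in zip(theta, empirical, expected)]
    return theta


data = [(0, 0, 0), (1, 1, 1), (0, 0, None), (1, None, 1),
        (None, 1, 1), (0, 0, 1), (1, 1, None), (0, None, 0)] * 5
theta = stochastic_em(data)
p = joint(theta)
print("P(X0 == X1) =", round(sum(px for x, px in zip(ALL_STATES, p) if x[0] == x[1]), 3))
```

Starting from all-zero parameters makes the first E-step sample uniformly from the state space; later E-steps draw from the model learned so far, so the completions and the parameters gradually reinforce the dependencies visible in the observed entries.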

If the gaps in the dataset are extremely large, the randomness of the first expectation step, which simply samples at random from the state space, can dominate the estimation of the initial parameters. To counter this, statistical knowledge can be derived from the observed data and incorporated into parameter estimation in the form of regularization.
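One way such knowledge could enter the estimation is sketched below, under explicit assumptions that are not taken from the paper: the prior parameters `theta0` are set to log pairwise frequencies, counted per edge only over rows in which both endpoints are observed, and the regularizer is a quadratic (L2) penalty pulling the parameters toward `theta0`. The chain structure, the feature layout (index `4*e + 2*x_u + x_v` for binary states), and all names are hypothetical.

```python
import math

# Hypothetical chain X0 - X1 - X2 over binary states; None marks a missing entry.
STATES, EDGES = (0, 1), [(0, 1), (1, 2)]


def empirical_prior(data, alpha=1.0):
    """Prior parameters theta0: log of Laplace-smoothed pairwise frequencies per edge,
    counted only over rows where both endpoints of that edge are observed."""
    theta0 = []
    for u, v in EDGES:
        counts = [[alpha] * len(STATES) for _ in STATES]
        for row in data:
            if row[u] is not None and row[v] is not None:
                counts[row[u]][row[v]] += 1
        total = sum(map(sum, counts))
        # Feature layout 4*e + 2*x_u + x_v: x_u-major, x_v-minor within each edge.
        theta0 += [math.log(counts[a][b] / total) for a in STATES for b in STATES]
    return theta0


def regularized_gradient(loglik_grad, theta, theta0, lam=0.1):
    """Gradient of loglik(theta) - lam / 2 * ||theta - theta0||^2."""
    return [g - lam * (t - t0) for g, t, t0 in zip(loglik_grad, theta, theta0)]


# Heavily incomplete toy data: several rows observe only one of the two edges.
data = [(0, 0, None), (1, 1, None), (None, 0, 0), (0, 0, 1)]
theta0 = empirical_prior(data)
print([round(t, 3) for t in theta0])
```

In an EM-style training loop, this penalized gradient would replace the plain log-likelihood gradient in each M-step, so that early, noisy completions cannot pull the parameters far from what the observed pairs already suggest. Log pairwise frequencies are only a heuristic target for MRF log-potentials, not their maximum-likelihood values.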

Data reconstruction

The question now arises: how can the trained MRF fill in the gaps in the dataset with meaningful values? The MRF allows us to calculate the probability of specific assignments and thus compare the quality of different predictions.

It is also possible to calculate the most probable assignment, known as the Maximum A Posteriori (MAP) state. For incomplete data, the MAP state is conditioned on the observed entries. Gaps in the dataset can then be filled by replacing all missing entries with the MAP prediction. Alternatively, the MRF can calculate the probabilities of different predictions and combine them if necessary.
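Both options can be illustrated on a small hypothetical chain MRF: four binary variables, with hand-picked edge strengths that reward equal neighboring values. This minimal sketch computes the conditional MAP state by enumerating all completions of the missing entries and, as one way of working with the probabilities of several predictions, the posterior marginal of each variable. Enumeration is exponential in the number of missing entries; realistic models would use dedicated inference algorithms such as belief propagation instead.

```python
import itertools
import math

# Hypothetical chain X0 - X1 - X2 - X3 over binary states; None marks a missing entry.
STATES = (0, 1)
# Edge strengths w: a pair contributes +w to the log-score if equal, -w otherwise.
WEIGHTS = {(0, 1): 2.0, (1, 2): 0.5, (2, 3): 1.0}


def score(x):
    """Unnormalized log-probability of a full assignment x."""
    return sum(w if x[u] == x[v] else -w for (u, v), w in WEIGHTS.items())


def completions(row):
    """All full assignments that agree with the observed entries of row."""
    return list(itertools.product(*[STATES if r is None else (r,) for r in row]))


def map_impute(row):
    """Conditional MAP state; Z cancels, so unnormalized scores suffice."""
    return max(completions(row), key=score)


def posterior_marginals(row):
    """P(X_i = s | observed entries) for every variable i and state s."""
    cands = completions(row)
    weights = [math.exp(score(x)) for x in cands]
    z = sum(weights)
    return [{s: sum(w for x, w in zip(cands, weights) if x[i] == s) / z for s in STATES}
            for i in range(len(row))]


row = (1, None, None, 0)
print("MAP completion:", map_impute(row))
for i, marg in enumerate(posterior_marginals(row)):
    print(f"X{i}:", {s: round(p, 3) for s, p in marg.items()})
```

Here the observed ends disagree, and the MAP completion `(1, 1, 0, 0)` places the switch from 1 to 0 at the weakest edge (X1 - X2). The posterior marginals additionally indicate how confident each filled-in value is.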

Application in the context of satellite data

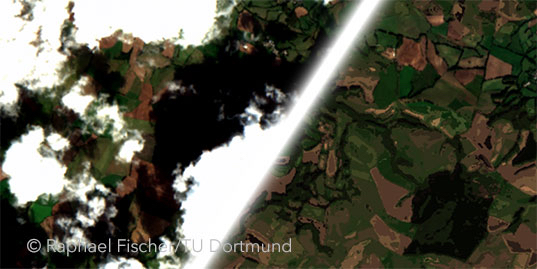

Satellite images serve as a good example of incomplete datasets. They have been a staple of research for years, for example in the areas of climate change and land use analysis. However, in images captured from space, large parts of the Earth’s surface are obscured by clouds. Accordingly, “Gap Filling” plays an important role in processing satellite data. The illustration shows how clouds can be removed from the images using our approach.

Satellite images of the Earth’s surface that are partially obscured by clouds can be reconstructed using probability-based modeling.

As shown, the presented MRFs can reconstruct even heavily clouded images. For spatio-temporal satellite data, it makes sense to model only the local spatial neighborhood of individual pixels; the finished model can then be “slid” over the entire dataset. Unlike other methods, our approach does not rely on additional information and makes no assumptions about the available data. To improve predictions for heavily clouded images, empirical knowledge can be derived directly from the data and incorporated into training as regularization. In our experiments, the MRF-based predictions for clouded regions were measurably better than reconstructions from other approaches. This is also evident in the visual comparison.
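The sliding-window idea can be sketched independently of the model itself. This minimal NumPy sketch assumes a discretized image series of shape (time, height, width) in which cloud-covered pixels are marked with a hypothetical sentinel value `-1`. For each missing pixel it cuts out a small patch (a spatial window at the same and the adjacent time steps, which is an assumption of this sketch) and hands it to a local predictor. The predictor shown, the most frequent observed value in the patch, is only a placeholder for a conditional MAP query to a trained MRF; it is not the paper's method.

```python
import numpy as np

MISSING = -1  # hypothetical sentinel for cloud-covered pixels


def slide_fill(stack, predict, radius=1):
    """Apply one local model at every missing pixel of a (T, H, W) integer series.
    The model only sees a small patch; patches are cut from the original input,
    so predictions are based on observed values only."""
    filled = stack.copy()
    for t, i, j in zip(*np.nonzero(stack == MISSING)):
        patch = stack[max(t - 1, 0):t + 2,
                      max(i - radius, 0):i + radius + 1,
                      max(j - radius, 0):j + radius + 1]
        filled[t, i, j] = predict(patch)
    return filled


def mode_of_observed(patch):
    """Placeholder local model: most frequent observed state in the patch."""
    values = patch[patch != MISSING]
    if values.size == 0:
        return MISSING  # nothing observed nearby, so the gap stays open
    return int(np.bincount(values).argmax())


rng = np.random.default_rng(0)
series = rng.integers(0, 4, size=(3, 6, 6))  # 3 time steps, 4 discrete states
series[1, 1:5, 1:5] = MISSING                # a "cloud" in the middle image
result = slide_fill(series, mode_of_observed)
print((result == MISSING).sum(), "pixels left unfilled")
```

Because the local model is small and shared across all positions, it only has to be trained once; replacing `mode_of_observed` with an MRF-based predictor would leave `slide_fill` unchanged.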

More information in the associated paper:

No Cloud on the Horizon: Probabilistic Gap Filling in Satellite Image Series

Raphael Fischer, Nico Piatkowski, Charlotte Pelletier, Geoffrey Webb, François Petitjean, Katharina Morik. IEEE International Conference on Data Science and Advanced Analytics (DSAA), 2020.