The bin packing problem is one of the hardest problems for digital computers known in computer science. It goes as follows:

Given a set of objects of a certain size and a set of containers of a certain volume. Is it possible to fit all the given objects into the containers?

Although its name and the example we will discuss in this article suggest applications mainly in logistics, bin packing can also be used to express other problems like routing or process coordination. In this Blog post, we will present a concrete example for bin packing and apply two naive solution strategies. We discuss under what practical considerations one approach might be preferable over the other.

Bin packing

Bin packing is a combinatorial optimization problem. This class of problems is characterized by asking for a particular combination or order of problem specific entities, such that the combination fulfills a desired criterion: For bin packing, this is the optimal arrangement of different objects with known attributes into a given set of containers. In practice, one is not only interested in the yes-no-answer of whether or not all objects can fit into the given containers. One rather wants to know exactly how the objects have to be placed, i.e. the specific configuration, in order to fulfill the criterion. From an economic perspective it is reasonable to not only look for a solution that simply arranges the objects in an arbitrary order, but for one that uses as few containers as possible. On the other hand, time might be a limiting factor and one might be willing to compromise packing density for a faster packing pace.

The crux of combinatorial optimization problems is that one does not know in advance whether or not a configuration with the desired properties exists for the given set of objects. Neither does one know what the next best reachable configuration is. To be certain that one has found the optimal reachable configuration, there is no other way than to calculate and compare all possible configurations.

The set of all possible combinations grows exponentially (or stronger) with the size of the set of objects that is to be considered. This enormous growth in complexity makes it impossible for digital computers to perform the calculations necessary to guarantee optimality of a solution. To circumvent this problem in practice, one has to rely on heuristics. Heuristics try to prune the searchspace, i.e. the set of all combinations that are to be considered, such that as many bad combinations as possible are excluded while as many good ones as possible remain. This is achieved by introducing clever assumptions about the concrete problem.

Bin packing in 2D

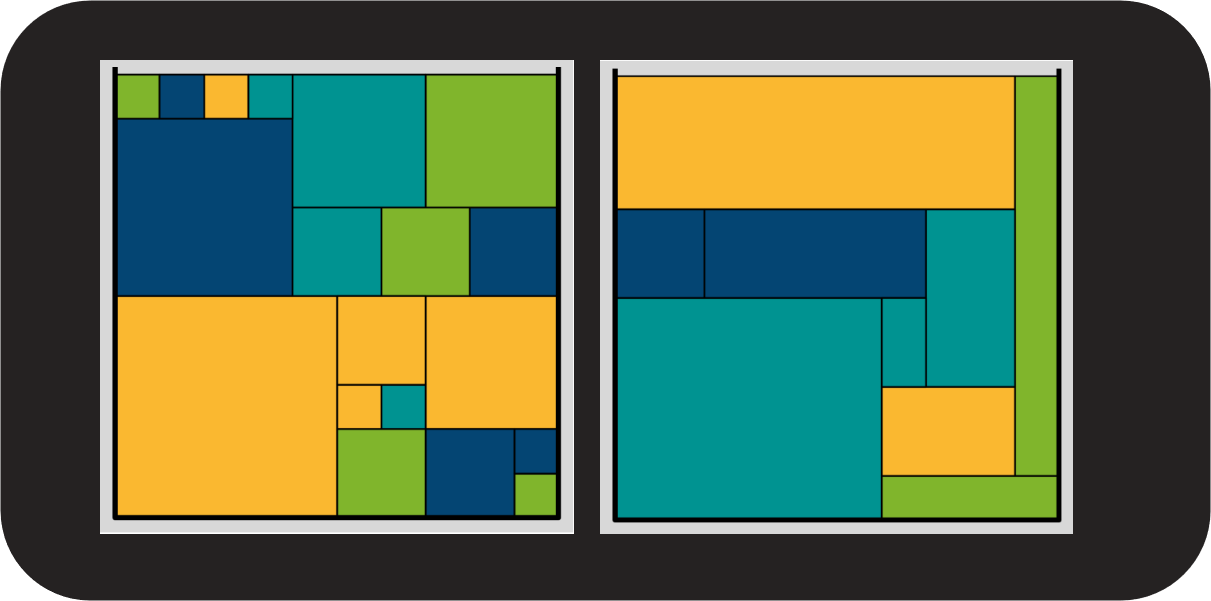

In this Blog post, we discuss a two-dimensional variant of the bin packing problem where we try to fit rectangular objects into quadratic 10×10 containers. The following rules are in place: The objects can be rotated by 90 degrees. Once an object is placed, the position is final and cannot be changed again. A configuration is valid if no two objects overlap and no object sticks out of the box.

The figure shows two containers, packed densely with colored objects. This configuration is an optimal solution. One can also see, that the optimal solution is not unique, there are other possibilities to pack the containers densely. For some context: The smallest object is 1×1 units in size, the largest 6×5 units in width and height.

Max-Rest and First-Fit Algorithms

What are possible assumptions to guide a heuristic to produce close to optimal configurations? As previously elaborated, one objective is to find dense configurations. At the same time, we want to consider the possibility of time being a constraint.

Max-Rest-Algorithm. We begin with considerations for a time-critical application: If there is a lot of capacity remaining in a container, the probability is high that one is able to fit an object in it. This assumption puts an emphasis on reducing the number of times that we unsuccessfully try to place an object into a container where no suitable opening is left. We hence save time. This leads to the Max-Rest algorithm which places objects using the following logic:

Choose the container with the highest remaining capacity. Try to place the object in it. If placement is not possible, open a new container and place the object in it.

First-Fit Algorithm. If time is less of a concern and it is more important to obtain a dense configuration, we might make the following assumption: The more often we pick a certain container and try to place an object in it, the higher is the chance to pack the container densely. This train of thought leads to the First-Fit algorithm. For this algorithm it is now important that the containers have a fixed order. The algorithm goes as follows:

Traverse the list of containers from first to last. Place the object in the first container where it fits. If it does not fit in any container, open a new container, place the object inside and append the new container to the end of the list of containers.

Placing an object. For a better comprehension of how a specific configuration is calculated by the algorithms, we also need to define exactly how an object is placed inside a certain container. This procedure is done as follows:

Compute all open positions in the container. If the total capacity remaining is smaller than the size of the object that is to be placed, abort and report an error. If there is enough capacity left, traverse all open positions and try to place the object there. Start with the position that is the furthest left and then the furthest south. Place the lower left corner of the object on that position. If the object overlaps with another object, try to rotate it clockwise by 90 degrees and check for overlap again. If the object still overlaps with another one, move on to the next position. If all open positions were considered and the object has still not been placed, report an error.

Online Scenario

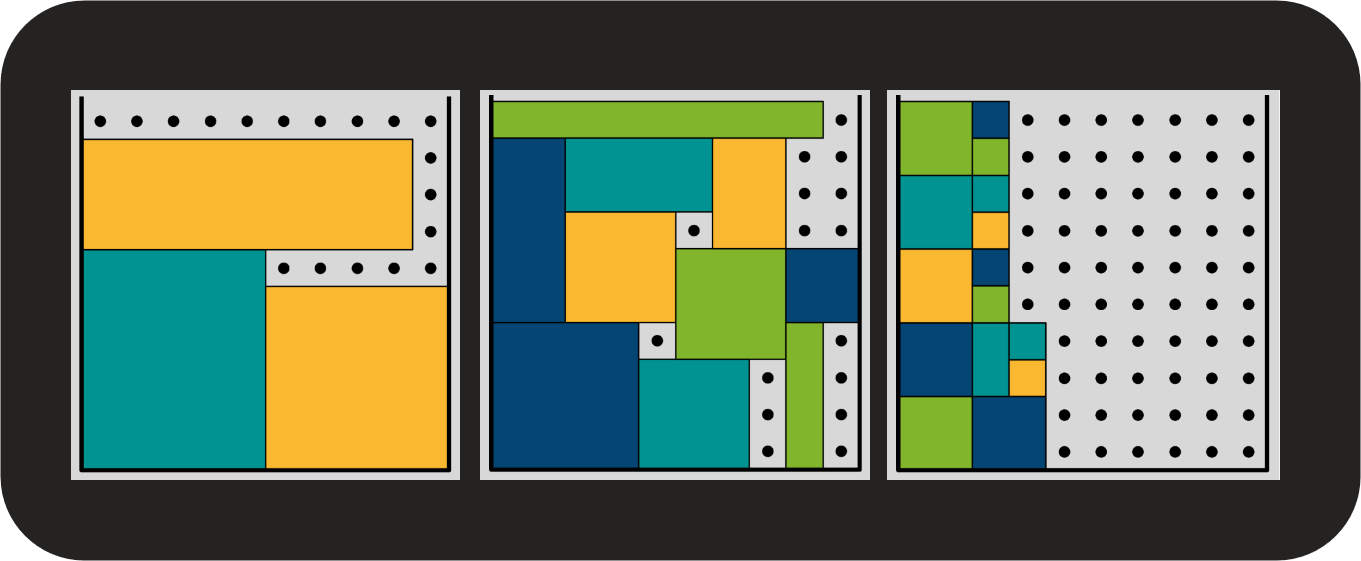

We will present the objects to the algorithms in the exact order in which we have previously retrieved them. This simulates an online scenario in which we do not make any assumptions about how many objects there are in total or what properties (here: sizes) they might have. Let us see what configurations we obtain applying the Max-Rest and First-Fit algorithms. The following visualization shows the result of Max-Rest:

Max-Rest is able to fit all objects into three containers. One can observe large, coherent areas of open positions (black dots). The containers have [27, 25, 48] open positions each. The algorithm only tried twice to place an object into a container where it did not fit (unsuccessful placement). The output of the First-Fit algorithm looks quite different:

Again, there are open spots in every container, but the first two containers are over 90% full. The last container holds only one object. From a density perspective the result of First-Fit is clearly superior to that of the Max-Rest. First-Fit made considerably more unsuccessful attempts at placement, namely 11. Both observations are to be expected. First-Fit always tries to place the object in the anterior containers. Over time, those containers fill up such that larger objects do not fit in any more but smaller ones can still be placed successfully.

Offline Scenario

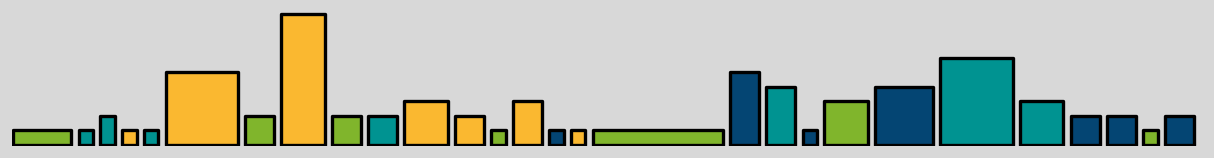

In the previous section, we made the assumption that we do not know how many or what kind of objects have to be packed. Let us now consider the offline scenario in which we know exactly how many objects we need to store and what properties they have. In this case, we can apply preprocessing. A simple preprocessing could be sorting the objects by size. If we sort them by surface area and width, we obtain the following sequence:

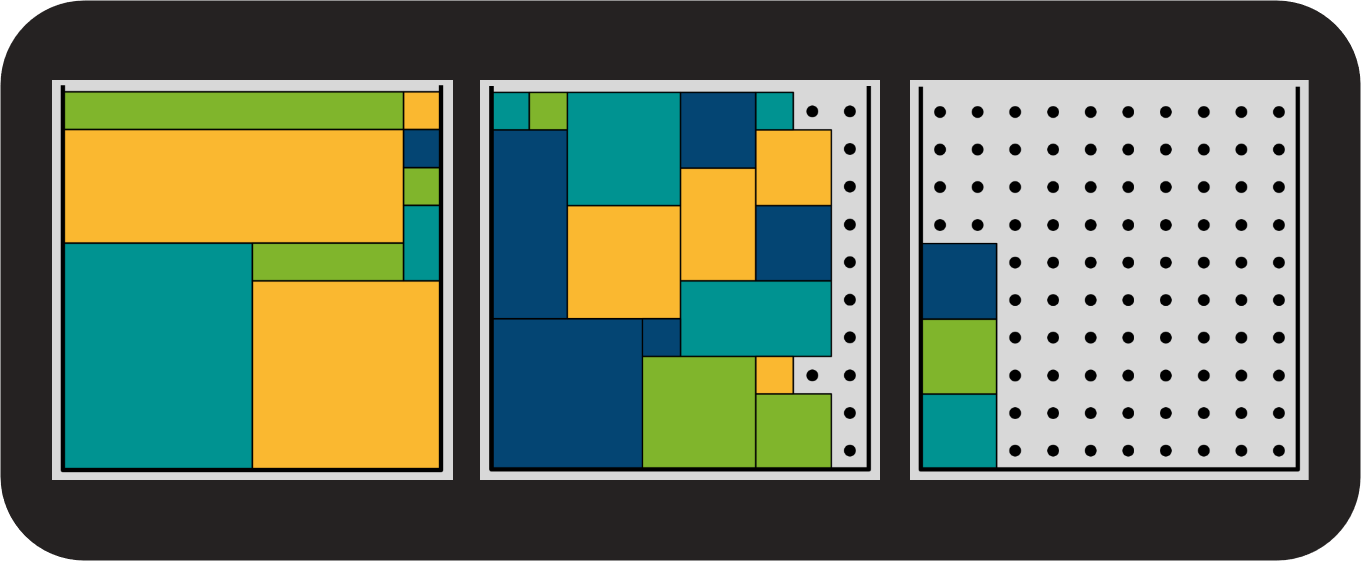

Figures 6 and 7 depict the output of the two heuristics applied to the ordered list. Note that First-Fit is also called First-Fit-Decreasing when applied to a set of objects preprocessed in the above detailed fashion.

Both algorithms again store the objects in three containers each. In the containers packed by Max-Rest the number of open positions is [18, 16, 66] for the respective containers. The load of the first two containers has increased while the load of the third has decreased significantly. Max-Rest again only made two unsuccessful attempts at placing an object.

The first container of the First-Fit-Decreasing solution looks very similar to the one of Max-Rest. But First-Fit-Decreasing succeeded in filling out every position of the first container. The remaining capacity of the containers is [0, 12, 88] respectively. The load of the second container has increased while the load of the third has decreased. The number of unsuccessful placing attempts has doubled to 22. Taking a closer look at the first container, this high number is hardly surprising. After the first three largest objects had been placed, the container was already so full that only the smallest objects could still be placed inside it. Since we had sorted the objects by size, the small ones came last and First-Fit tried to fit all medium-sized objects into the first container to no avail.

The table summarizes the statistics:

| Algorithm | Open capacity per container | Number of unsuccessful placements |

| Max-Rest | [27, 25, 48] | 2 |

| First-Fit | [3, 6, 91] | 11 |

| Max-Rest sorted | [18, 16, 66] | 2 |

| First-Fit sorted | [0, 12, 88] | 22 |

Being able to preprocess the set of objects improved packing density for both algorithms. First-Fit-Decreasing was able to fill a container completely. If time is a limiting factor, our analysis suggests to use the Max-Rest algorithm because the number of unsuccessful placement attempts was reduced.

Clever guessing

In this blog post, we learned that combinatorial optimization problems will remain intractable hurdles for digital computers. The best recommendation is to guess smartly. This does not necessarily yield the optimal solution, but we saw that simple heuristics can still lead to acceptable results. We hereby discussed a concrete bin packing problem in detail and compared the First-Fit and the Max-Rest algorithms in an online and an offline scenario. Further naive approaches would be the Next-Fit or Best-Fit algorithm. Evolutionary algorithms also provide good heuristics and have practical applications. One such application can be found in the blog post “Evolutionary Optimization of Quantum Circuits”, which deals with hardware that could potentially make combinatorial optimization more tractable.

ML2R Autumn School 2021

Combinatorial optimization is not a typical application scenario for Machine Learning methods. In this year’s ML2R Autumn School, taking place October 4th-8th, motivated Master’s and PhD students will have the opportunity to creatively think of ways to apply Machine Learning procedures to combinatorial structures. The Autumn School will revolve around a well-defined bin packing problem. Participants will work in small teams to develop their own ML-based solution. Participation is free but the number of slots is limited. Application is open until August 29th.