While medical knowledge, diagnostic technologies and treatment options continue to advance, large parts of the world still face structural limitations in access to eye care. Preventable or treatable diseases such as diabetic retinopathy and cataracts remain among the leading causes of visual impairment and blindness worldwide, with the highest burden in low- and middle-income countries. Limited availability of trained specialists, infrastructure constraints, and logistical barriers continue to restrict early diagnosis and systematic quality assurance.

Research at Lamarr (Prof. Dr. Thomas Schultz), in close collaboration with the University Hospital in Bonn (PD Dr. Maximilian Wintergerst), Sankara Eye Foundation India (Dr. Kaushik Murali), and Microsoft Research India (Mohit Jain, PhD), addresses these challenges through AI-based Ophthalmic Video Analysis. The approach combines low-cost image and video acquisition – such as smartphone-based retinal recording – with automated video analysis based on artificial intelligence and machine learning. The objective is to make existing diagnostic and surgical data more accessible and interpretable, particularly in settings where conventional infrastructure is limited.

AI-based Ophthalmic Video Analysis for Diabetic Retinopathy Screening – Increasing Access to Eye Care

One way in which AI-based Ophthalmic Video Analysis can improve healthcare in the Global South is by expanding access to routine eye screenings. In many regions, screening opportunities remain limited due to shortages of trained specialists, long travel distances, and high examination costs. Diabetic retinopathy is a prominent example where systematic screening is essential but not consistently available.

Diabetic retinopathy is a common and serious complication of diabetes that damages the small blood vessels of the retina, the light-sensitive tissue at the back of the eye. In its early stages, the disease often causes no noticeable symptoms. Vision loss typically occurs only once it has progressed to more advanced stages, when treatment becomes more complex and less effective. As a result, diabetic retinopathy remains a leading cause of preventable blindness worldwide. Regular screening allows clinicians to detect characteristic retinal changes long before visual impairment sets in, while treatment is still comparatively simple and effective.

In high-income countries, telemedical screening programs for diabetic retinopathy image the retina with specialized cameras. These images are assessed by ophthalmologists or trained graders. In recent years, certified AI-based medical devices have been introduced that automate image quality assessment and image-based diagnosis, demonstrating that automated analysis can be safe and effective. However, these systems rely on relatively large and costly hardware, which limits their applicability in low-resource settings, particularly in remote or rural areas.

Smartphone-based retinal imaging systems have emerged as a promising portable and comparatively low-cost alternative that is well suited for use in low- and middle-income countries. By combining standard smartphones with specialized optical adapters, some of which are available at low cost, it becomes possible to capture retinal images with a setup that is easy to transport and capable of producing diagnostically usable image quality. This creates new possibilities for early diagnosis in settings where conventional imaging infrastructure is currently unavailable.

Even when suitable imaging hardware is available, acquiring single high-quality images of peripheral retinal regions, as is traditionally required for diagnosis, can be challenging – especially when examinations are performed by non-specialists or under time pressure. Capturing multiple images and manually selecting the most suitable ones requires experience and increases examination time. For this reason, ongoing work at Lamarr and the University Hospital in Bonn, together with various partners in low- and middle-income countries (Sankara Eye Foundation India, University of Calabar Teaching Hospital, Nigeria, Organization for Rural Community Development Bangladesh, and University of Cape Coast, Ghana), focuses on developing a setup that leverages the ability of modern smartphones to record short retinal videos while the examiner adjusts focus and scans different retinal areas.

Rather than placing the responsibility on the operator to identify optimal frames, our video-based strategy aims to reduce the time and level of skill required to perform the examination by using AI-based Ophthalmic Video Analysis to process recorded sequences automatically. Videos provide multiple views of the retina, increasing the likelihood that diagnostically relevant regions are captured with sufficient quality in at least some frames. In addition, temporal information can support algorithms in distinguishing anatomical features from artifacts such as reflections or momentary blur.
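To make the idea of automatic frame selection concrete, the following is a minimal sketch and not the method used in the project. It assumes that each video frame is available as a 2D grid of grayscale values and uses the variance of a simple Laplacian filter, a common generic focus measure, as a stand-in for a learned quality model. The function names and the parameters `k` and `min_gap` are hypothetical.

```python
from statistics import pvariance


def laplacian_variance(frame):
    """Generic focus measure: variance of the 4-neighbour Laplacian.

    `frame` is a 2D list of grayscale values (at least 3x3).
    Blurry or uniform frames give values near zero.
    """
    height, width = len(frame), len(frame[0])
    responses = [
        frame[y - 1][x] + frame[y + 1][x] + frame[y][x - 1] + frame[y][x + 1]
        - 4 * frame[y][x]
        for y in range(1, height - 1)
        for x in range(1, width - 1)
    ]
    return pvariance(responses)


def select_sharpest_frames(frames, k=3, min_gap=5):
    """Return up to k frame indices with the highest focus score.

    Selected frames are kept at least `min_gap` frames apart, so that they
    are more likely to show different views of the retina (an assumption
    made for this sketch).
    """
    scores = [laplacian_variance(frame) for frame in frames]
    ranked = sorted(range(len(frames)), key=lambda i: scores[i], reverse=True)
    chosen = []
    for index in ranked:
        if all(abs(index - other) >= min_gap for other in chosen):
            chosen.append(index)
        if len(chosen) == k:
            break
    return sorted(chosen)
```

In practice, a learned quality model would likely replace the hand-crafted focus score, but the selection logic illustrates why the operator no longer needs to pick frames manually.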

By combining smartphone-based retinal imaging with AI-driven video analysis, this approach aims to make diabetic retinopathy screening more scalable and accessible. Further, it might lower the technical barrier for image acquisition, reduce dependence on specialized personnel, and support screening in settings where traditional infrastructure is limited. In this way, early diagnosis may become more feasible for populations that are currently underserved, enabling timely treatment and reducing the risk of avoidable vision loss.

Funding is provided by the Else Kröner-Fresenius-Stiftung (Funding line “Digitale Gesundheit in Entwicklungsländern”) and the German Federal Ministry for Economic Cooperation and Development (Funding line “Klinikpartnerschaften Global”).

When AI Looks over a Surgeon’s Shoulder – AI-based Ophthalmic Video Analysis in Cataract Surgery

We have seen how automated analysis of visual data can support diagnosis. The same principles of AI-based Ophthalmic Video Analysis also apply in the surgical setting. As a second application, we examine how similar ideas can improve cataract surgery, particularly in resource-constrained environments.

Cataract is a clouding of the eye’s natural lens. It often develops with age but may also occur in connection with other conditions, including diabetes. The disease leads to blurred vision and increased light sensitivity and, in advanced stages, to blindness; it remains a leading cause of blindness worldwide. The only effective treatment is surgical replacement of the clouded lens with an artificial intraocular lens. Cataract surgery is therefore one of the most frequently performed surgical procedures globally.

In high-income countries, cataract surgery is typically performed using phacoemulsification, in which the opacified lens is emulsified with an ultrasound probe. The combination of specialized and comparatively expensive equipment, extensive training, structured quality control, and post-operative follow-up generally results in excellent surgical outcomes and low complication rates. In many low- and middle-income countries, however, large patient populations are typically treated using the more cost-effective small-incision cataract surgery (SICS) technique, in which the lens is extracted in one piece.

Performing such surgeries in a high-throughput setting can pose challenges for training, quality assurance, and continuous improvement. In this context, video recordings represent untapped potential that ongoing joint work at Lamarr, the University Hospital in Bonn, Sankara Eye Foundation India, and Microsoft Research India aims to utilize.

Cataract surgeries are performed through an operating microscope, which makes it straightforward to record videos of these procedures. These recordings capture detailed information about surgical workflows, instrument use, and subtle events that may be associated with complications or suboptimal outcomes. Despite this, most surgical videos are reviewed only selectively – for example in case of complications or for teaching purposes. The sheer volume of recordings makes systematic manual analysis impractical, particularly in high-volume environments.

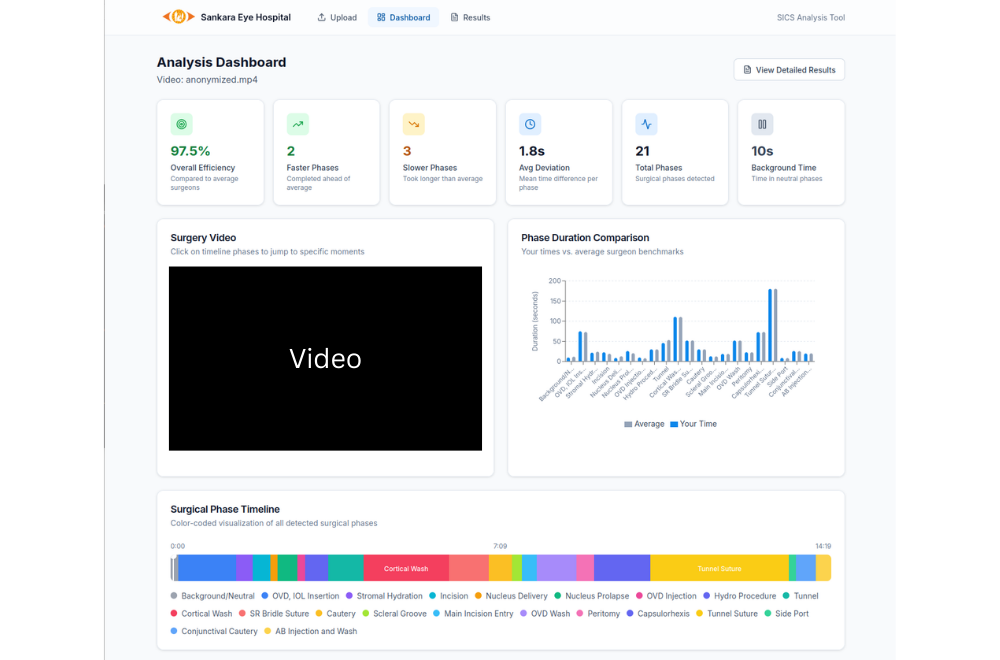

Automated video analysis enables this data to be assessed at scale. By analyzing surgical videos after the procedure, AI systems can support monitoring of surgical performance, identification of deviations from standard workflows, and detection of early indicators of risk. Such post-surgical analysis can support multiple use cases, including training of junior surgeons, objective feedback for experienced practitioners, and quality assurance at the level of departments or entire surgical programs.

A foundational task in the automated analysis of such videos is surgical phase detection, which aims to identify which step of the procedure is currently being performed – for example incision, extraction of the clouded lens, or implantation of the intraocular lens. While previous work focused primarily on the phacoemulsification approach used predominantly in high-income settings, we recently reported the first phase detection results for videos from SICS. Our findings suggest that this setting is more challenging, due to the larger number of phases and longer overall procedure durations. To advance research in this area, we organized an international challenge that, for the first time, made a large annotated dataset of SICS videos available to the wider scientific community. Contributions from four different continents led to a novel approach leveraging recent advances in foundation models and attention-based temporal modeling, increasing accuracy not only for SICS but also for existing phacoemulsification datasets.
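To illustrate what the output of phase detection looks like, here is a deliberately simple sketch. It assumes that some hypothetical classifier has already produced one phase label per video frame, and it replaces the attention-based temporal modeling mentioned above with a plain sliding-window majority vote. The function names and the `window` parameter are assumptions made for this example.

```python
from collections import Counter
from itertools import groupby


def smooth_labels(labels, window=5):
    """Replace each frame label by the majority label in a centred window.

    This suppresses isolated misclassifications, a much simpler stand-in
    for learned temporal models.
    """
    half = window // 2
    smoothed = []
    for i in range(len(labels)):
        neighbourhood = labels[max(0, i - half): i + half + 1]
        smoothed.append(Counter(neighbourhood).most_common(1)[0][0])
    return smoothed


def to_segments(labels):
    """Collapse per-frame labels into (phase, start_frame, end_frame) tuples.

    `end_frame` is exclusive, so segment length is end_frame - start_frame.
    """
    segments = []
    start = 0
    for phase, run in groupby(labels):
        length = len(list(run))
        segments.append((phase, start, start + length))
        start += length
    return segments
```

Applied to a noisy label sequence, `to_segments(smooth_labels(labels))` yields a short list of contiguous phases that can then be compared against the expected surgical workflow.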

Phase detection is often studied separately from instrument segmentation, which involves identifying and localizing the surgical tools used during the operation. Our recent work explores the opportunities arising from their natural interdependence. Specific instruments are typically used in specific phases of the surgery, and changes in instrument usage often signal transitions between phases. We demonstrate that AI systems accounting for these tool-phase co-occurrence patterns can achieve more robust and accurate analysis than systems that consider either task in isolation.
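The interplay between instruments and phases can be sketched with a small example. The code below is not our published model; it is a minimal illustration that counts how often each tool appears in each phase in annotated frames and uses these counts to re-weight the phase probabilities of a hypothetical image-based classifier. The product-style fusion, the smoothing parameter `alpha`, and all names are assumptions made for this sketch.

```python
from collections import Counter, defaultdict


def cooccurrence_counts(annotations):
    """Count tool-phase co-occurrences.

    `annotations` is an iterable of (phase, tools) pairs, one per frame,
    where `tools` is a collection of instrument names visible in the frame.
    """
    counts = defaultdict(Counter)
    for phase, tools in annotations:
        for tool in tools:
            counts[tool][phase] += 1
    return counts


def fuse_with_tools(phase_probs, visible_tools, counts, alpha=1.0):
    """Re-weight phase probabilities using the tools detected in a frame.

    For each visible tool, the estimate of P(phase | tool) with additive
    smoothing `alpha` is multiplied into the classifier probability; the
    result is renormalised to sum to one.
    """
    phases = list(phase_probs)
    fused = dict(phase_probs)
    for tool in visible_tools:
        total = sum(counts[tool][p] for p in phases) + alpha * len(phases)
        for p in phases:
            fused[p] *= (counts[tool][p] + alpha) / total
    norm = sum(fused.values())
    return {p: value / norm for p, value in fused.items()}
```

If the image-based classifier is undecided between two phases, seeing an instrument that has so far appeared almost exclusively in one of them shifts the fused estimate towards that phase.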

Beyond improving algorithmic performance, this joint analysis also supports clinically meaningful interpretation. For example, unexpected instrument usage within a given phase or prolonged duration of certain phases may indicate technical difficulties or increased risk of complications, providing structured feedback that can support both individual surgeons and healthcare organizations.
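As a rough illustration of how such structured feedback could be derived, the following sketch flags phases that last much longer than a reference duration and instruments that appear in a phase where they are not expected. The reference durations, the `factor` threshold, and the expected-tool table are hypothetical inputs that would have to be defined with clinical experts; none of them come from our studies.

```python
def flag_prolonged_phases(segments, reference_seconds, factor=2.0):
    """Return (phase, duration, reference) for unusually long phases.

    `segments` holds (phase, start_second, end_second) tuples;
    `reference_seconds` maps each phase to a typical duration.
    Phases without a reference value are skipped.
    """
    flags = []
    for phase, start, end in segments:
        duration = end - start
        reference = reference_seconds.get(phase)
        if reference is not None and duration > factor * reference:
            flags.append((phase, duration, reference))
    return flags


def flag_unexpected_tools(observations, expected_tools):
    """Return (phase, tool) pairs where the tool is not expected in the phase.

    `observations` holds (phase, tool) pairs; `expected_tools` maps each
    phase to the set of instruments normally used in it.
    """
    return [
        (phase, tool)
        for phase, tool in observations
        if tool not in expected_tools.get(phase, set())
    ]
```

Flags of this kind are only starting points for human review; whether a prolonged phase actually reflects a difficulty is a clinical judgment.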

While our current focus remains on post-operative video analysis, the same underlying technologies open up prospects for real-time assistance in the operating room. In principle, AI systems could monitor surgical progress as it unfolds, provide context-aware guidance, or alert surgeons and supervisors to potential issues before they escalate. Developing such systems will involve not only technical advances but also ethical and regulatory considerations, which are part of our ongoing work.

Implications for Clinical Practice and Healthcare Delivery

In summary, the examples of diabetic retinopathy screening and cataract surgery video analysis illustrate how combining portable and affordable image or video acquisition with machine learning can support patient care, particularly under resource constraints.

In diabetic retinopathy screening, smartphone-based video analysis lowers the barrier to early diagnosis, making screening more accessible to underserved populations.

In cataract surgery, automated post-operative video analysis supports training, supervision, and quality assurance in high-volume clinical settings, contributing to improved patient outcomes and operational efficiency. In both scenarios, AI augments human expertise by providing clinicians with objective, structured, data-driven feedback that informs clinical decisions and treatment.

More broadly, these examples involve medical data whose scale exceeds the scope of manual review but can be made interpretable and actionable through AI-based systems. In low- and middle-income countries, this may help address infrastructure and personnel constraints and extend access to quality care where it is needed most. At the same time, even in high-income healthcare systems, similar tools can enhance efficiency, reduce clinician workload, standardize quality assessment, and support continuous improvement in both diagnostic and surgical practice. In this way, such systems contribute to more equitable, efficient, and precise healthcare delivery, with the potential to improve outcomes for patients around the world.