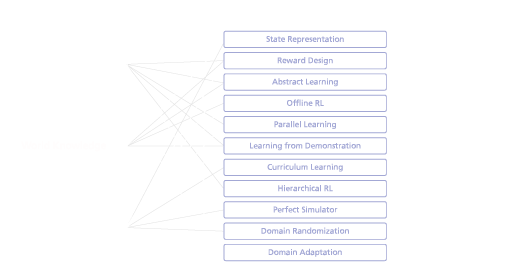

In the previous post of our RL for Robotics series, we introduced the fundamentals of Guided Reinforcement Learning (RL), a concept for accelerating training and improving the success of RL in real-world robotics. In particular, we presented the Guided RL taxonomy, a modular toolbox for integrating different sources of knowledge into the Reinforcement Learning pipeline (see Fig. 1). In practical terms, the toolbox shows how additional knowledge (left column) can be integrated into four specific steps of the RL pipeline (right column) via a series of Guided RL methods (middle column). Following these four steps, this post explores how Guided RL could be applied to three very different robotic tasks (dynamic locomotion, robust manipulation, and multi-robot navigation) and thereby provides an intuitive understanding of the methods and of how to use the toolbox.

© Fraunhofer IML

Pipeline Step 1: Problem Representation (States, Rewards, Actions)

The first step of applying Guided Reinforcement Learning is to translate the real-world robotics problem into the formal description underlying RL. To this end, additional knowledge can be integrated by adapting the state representation (Fig. 2a), formulating a task-specific reward function (Fig. 2b), and defining task-specific actions (Fig. 2c) for the desired learning problem. In the following, we examine each of these three methods using our example robotic applications.


- State representation describes the observable space for the model; approaches typically aim to transform or extend the state into more instructive representations. For the example of multi-robot navigation, this could mean integrating additional sensor signals, such as laser scans or depth-camera images, into the observation space. Although both sensors produce high-dimensional readings, laser scans would likely make learning more efficient and would hence be preferred for the state representation in this task.

- Reward design includes techniques that induce knowledge through appropriately designed dense reward functions or through automatic learning approaches. For the example of dynamic locomotion, the reward function would combine multiple optimization criteria. For instance, individual reward terms could encourage following the given velocity commands, reducing the overall energy consumption, or adopting specific locomotion styles such as trotting, jumping, or running.

- Abstract learning describes the selection of a task-specific action space for a robotics problem, which can potentially be hybridized with model-based approaches. For our example of learning robust manipulation, a straightforward approach would be to let the neural network control the robot's joints directly. However, by integrating existing control knowledge for the low-level operation, the neural network could instead operate at the task level, e.g., by directly commanding the end-effector pose, which could increase the data efficiency of training.
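
To make the reward design idea more concrete, the following is a minimal sketch of a multi-term locomotion reward. It is not taken from the publication: the function name `locomotion_reward`, the weights, and the exponential tracking kernel are all illustrative assumptions, and a real reward would typically contain further terms (for example, for locomotion style).

```python
import math


def locomotion_reward(
    commanded_velocity,
    measured_velocity,
    joint_torques,
    joint_velocities,
    w_tracking=1.0,       # assumed weight of the velocity-tracking term
    w_energy=0.001,       # assumed weight of the energy penalty
    tracking_scale=0.25,  # assumed width of the tracking kernel
):
    """Hypothetical dense reward: velocity tracking minus an energy penalty."""
    # Tracking term: 1.0 for a perfect match, decaying smoothly with the error.
    velocity_error = commanded_velocity - measured_velocity
    tracking = math.exp(-(velocity_error ** 2) / tracking_scale)

    # Energy term: sum of absolute mechanical power over all joints.
    power = sum(abs(tau * omega) for tau, omega in zip(joint_torques, joint_velocities))

    return w_tracking * tracking - w_energy * power


# Same tracking quality, but the second step spends ten times more energy.
efficient = locomotion_reward(1.0, 1.0, [2.0, 2.0], [1.0, 1.0])
wasteful = locomotion_reward(1.0, 1.0, [20.0, 20.0], [1.0, 1.0])
```

With these assumed weights, the energy-hungry step receives a lower reward than the efficient one even though both track the velocity command perfectly. Choosing the relative weights is the actual design work, since they determine how the policy trades tracking accuracy against energy consumption.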

Pipeline Step 2: Learning Strategy (Algorithmic Design Decisions)

The second step of applying Guided Reinforcement Learning integrates knowledge into the learning strategy. By training the policies on a recorded dataset (Fig. 3a), applying parallel learning architectures to a given problem (Fig. 3b), or using demonstration examples (Fig. 3c), training can likely be accelerated and performance improved.


- Offline RL uses offline data and tries to learn policies efficiently from recorded training sets. For our application of multi-robot navigation, this could include, for instance, collecting data from real-world experiments in the target environment. Because this data explicitly captures the behavior of both robots and dynamic obstacles under the given geometric constraints, it could be used to improve the efficiency, and hence accelerate the training, of the RL policy.

- Parallel learning deals with parallelizing the algorithmic components while balancing the scalability and robustness of the learning process. For dynamic locomotion, for example, this approach could be used to simulate many different types of environments at the same time. On the one hand, training under varied environmental conditions could improve the performance of the learning-based controller. On the other hand, computing the environments in parallel could accelerate robot training.

- Learning from demonstration aims to leverage provided examples and integrate them into the learning process to improve both the performance and the data efficiency of training. Applied to robust manipulation, this could mean, for instance, collecting a set of examples on the real robot and then using them to guide the training of the learning-based controller, thus improving data efficiency compared with learning entirely from scratch.
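
One possible way to let demonstrations guide training is to reserve a fixed share of every training batch for demonstration data. The sketch below illustrates this idea only; the class `DemoSeededBuffer`, the `demo_fraction` parameter, and the dictionary layout of a transition are hypothetical and not prescribed by the Guided RL taxonomy.

```python
import random


class DemoSeededBuffer:
    """Hypothetical replay buffer mixing demonstrations with agent experience."""

    def __init__(self, demonstrations, demo_fraction=0.25, seed=0):
        self.demonstrations = list(demonstrations)  # kept for the whole training run
        self.experience = []                        # filled by the learning agent
        self.demo_fraction = demo_fraction          # assumed share of demos per batch
        self.rng = random.Random(seed)

    def add(self, transition):
        """Store one transition collected by the agent."""
        self.experience.append(transition)

    def sample(self, batch_size):
        """Draw a batch (with replacement) from both data sources."""
        if not self.experience:
            n_demo = batch_size  # nothing collected yet: demonstrations only
        else:
            n_demo = round(batch_size * self.demo_fraction)
        if not self.demonstrations:
            n_demo = 0
        batch = self.rng.choices(self.demonstrations, k=n_demo) if n_demo else []
        n_agent = batch_size - n_demo
        if self.experience and n_agent:
            batch += self.rng.choices(self.experience, k=n_agent)
        return batch


# Ten demonstration transitions recorded on the robot (placeholder content).
demos = [{"source": "demo", "step": i} for i in range(10)]
buffer = DemoSeededBuffer(demos, demo_fraction=0.25)
for i in range(100):
    buffer.add({"source": "agent", "step": i})

batch = buffer.sample(8)
n_demo_in_batch = sum(t["source"] == "demo" for t in batch)
```

Early in training, before the agent has collected any data, the buffer falls back to demonstrations only, which is where the data-efficiency benefit over learning from scratch would be expected to show.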

Pipeline Step 3: Task Structuring (Breaking Down the Problem)

Step three of applying Guided Reinforcement Learning incorporates further knowledge by structuring the learning task, depending on the complexity of the real-world robotics situation. For instance, a large task could be learned progressively with increasing levels of difficulty (Fig. 4a) or be broken down into multiple smaller control tasks (Fig. 4b).


- Curriculum learning is based on the idea of structuring a complex task by iteratively solving simpler tasks with increasing levels of difficulty. For our case of learning multi-robot navigation, this could mean, for example, starting the Reinforcement Learning training with just one robot navigating in one simple environment. As training proceeds, more robots could be added step by step, along with other, more challenging types of environments. The decision when to increase the level of difficulty could either be predefined, e.g., based on the time that has passed during training, or be automated by linking it to the agent's actual performance.

- Hierarchical learning exploits the hierarchical structure underlying the learning task to solve different subtasks or to deploy high- and low-level policies. For learning dynamic locomotion, e.g., for a legged robot crossing challenging terrain, this method could be used to train different neural networks depending on either the type of environment or the desired locomotion speed. Moving through diverse environments could require different locomotion styles such as stepping, walking, jumping, or climbing. Locomotion at different target speeds could mean training different gaits, each optimized for energy efficiency at its speed. In both cases, a high-level policy could decide which of the trained networks to select during operation, depending on the commands or environmental conditions.
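
The automated variant of curriculum learning described above, where the difficulty is linked to the agent's performance, can be sketched as a small scheduler. The class `PerformanceCurriculum`, its window size, and its success threshold are assumptions chosen for illustration; the difficulty level here is simply the number of robots in the navigation task.

```python
from collections import deque


class PerformanceCurriculum:
    """Hypothetical scheduler that adds robots once the agent succeeds reliably."""

    def __init__(self, max_robots=4, window=20, threshold=0.8):
        self.num_robots = 1           # start with a single robot
        self.max_robots = max_robots
        self.threshold = threshold    # assumed success rate needed to advance
        self.results = deque(maxlen=window)

    def report(self, success):
        """Record one episode outcome and return the robot count for the next episode."""
        self.results.append(bool(success))
        window_full = len(self.results) == self.results.maxlen
        success_rate = sum(self.results) / len(self.results)
        if window_full and success_rate >= self.threshold and self.num_robots < self.max_robots:
            self.num_robots += 1
            self.results.clear()  # re-evaluate from scratch at the new difficulty
        return self.num_robots


# An agent that always succeeds advances one level every five episodes.
curriculum = PerformanceCurriculum(max_robots=3, window=5, threshold=0.8)
levels = [curriculum.report(success=True) for _ in range(12)]
# levels == [1, 1, 1, 1, 2, 2, 2, 2, 2, 3, 3, 3]
```

Clearing the result window after each promotion is a deliberate choice in this sketch: it prevents successes at the easier level from immediately triggering the next promotion.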

Pipeline Step 4: Sim-to-Real (Bridging the Gap)

Finally, additional knowledge could also be integrated into the training process to bridge the gap between simulation and reality and hence transfer the trained policies to real robots. This might include striving for more accurate simulations (Fig. 5a), using domain randomization to train on diverse simulated datasets (Fig. 5b), or applying domain adaptation approaches to transfer between different domains (Fig. 5c).


- Perfect simulators aim at building more realistic simulation environments in terms of accurate robot models, physics computation, and environment representation. For the case of learning dynamic locomotion, additional knowledge about the robot system could be integrated to build a more accurate simulation model. For instance, real-world measurements could be taken to obtain more accurate mass distributions of the robot's links, actuator responses under high loads, or more realistic ground contact models.

- Domain randomization aims to make the policies more robust by heavily randomizing the simulation in terms of either visual or dynamics properties. This method can be applied to train manipulation policies that are robust to changes in both robot dynamics and environmental perception. By training the policies on varying robot dynamics parameters, such as link masses, joint stiffnesses, or gripper torque limits, the performance of the policy can be improved even across different robot platforms. This approach could also be used to randomize the environment, e.g., by adding noise to the observation data or randomly changing the positions and parameters of the objects in the scene, thus yielding policies that are more robust to changing environmental conditions.

- Domain adaptation approaches usually require an adaptation module to transfer observations between the simulated and the real world, or vice versa. For the application of multi-robot navigation, this method could be used, for instance, to incorporate more realistic camera perception into the training, e.g., by projecting real-world camera images into the simulation. This approach could also be applied to enable the policy to deal with various environmental properties, such as different terrains or actuator dynamics, to improve the transfer of the trained policies to the real world.
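
The dynamics and observation randomization described for manipulation above can be sketched in a few lines. All names and ranges below (`RANDOMIZATION_RANGES`, `sample_dynamics`, `add_observation_noise`, the noise level) are hypothetical placeholders; in practice, the sampled values would be written into whatever simulator is used before each training episode.

```python
import random

# Hypothetical randomization ranges, expressed as multipliers on nominal values.
RANDOMIZATION_RANGES = {
    "link_mass_scale": (0.8, 1.2),
    "joint_stiffness_scale": (0.7, 1.3),
    "gripper_torque_limit_scale": (0.9, 1.1),
}
OBSERVATION_NOISE_STD = 0.01  # assumed sensor noise level


def sample_dynamics(rng):
    """Draw one set of dynamics multipliers, e.g. at the start of each episode."""
    return {
        name: rng.uniform(low, high)
        for name, (low, high) in RANDOMIZATION_RANGES.items()
    }


def add_observation_noise(observation, rng, std=OBSERVATION_NOISE_STD):
    """Perturb every observation entry with zero-mean Gaussian noise."""
    return [value + rng.gauss(0.0, std) for value in observation]


rng = random.Random(42)  # fixed seed so the sketch is reproducible
episode_params = [sample_dynamics(rng) for _ in range(3)]
noisy_observation = add_observation_noise([0.5, -0.2, 1.0], rng)
```

Because the policy never sees the same dynamics twice, it cannot overfit to one particular simulated robot. How wide the ranges should be remains a design decision: ranges that are too narrow may not cover the real system, while ranges that are too wide can make the task unnecessarily hard to learn.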

Summary

This blog post explored how Guided Reinforcement Learning can be applied to three very different robotic tasks, potentially accelerating the training process and improving the success of RL in real-world robotics. Over the coming months, this blog series will grow with reviews of applications of Guided RL to real-world robotic challenges, including discussions of the robotic platforms, the adopted methods of the Guided RL taxonomy, and the deployment of the policies to real robots. Stay tuned for more to follow!

For more details on Guided RL, a comprehensive review of the state of the art, and future challenges and directions, see the related journal publication in the IEEE Robotics and Automation Magazine (IEEE-RAM), which is available open-access on IEEE Xplore: Guided Reinforcement Learning: A Review and Evaluation for Efficient and Effective Real-World Robotics.