Modern clinical AI faces a fundamental tension: models require large, diverse datasets, yet patient data cannot simply be pooled across institutions. Multi-institutional learning is essential in medical imaging, oncology, radiology, and EHR-based risk prediction, where single-site datasets often lack sufficient diversity or statistical power. At the same time, regulatory frameworks such as the EU General Data Protection Regulation (GDPR) classify health information as a special category of personal data and impose strict data minimization and confidentiality requirements.

As a result, centralized model training is frequently infeasible in real-world hospital networks. Federated learning in healthcare aims to reconcile this tension: models are trained locally at each institution, and only model updates are shared rather than sensitive patient data. However, emerging research shows that privacy risks do not disappear simply because raw data remains local. This raises a natural question: if sharing raw data is too revealing, and sharing model parameters can still leak information, how little information is actually necessary for meaningful collaboration?

To understand how privacy can be meaningfully strengthened, we must examine where leakage occurs in conventional federated protocols.

Privacy Limitations of Federated Learning in Healthcare

In its canonical form, federated learning (e.g., FedAvg) aggregates model parameters or gradients across participating institutions. While raw medical images or structured patient records remain on-site, shared updates can encode latent information about the training data.
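To make the aggregation step concrete, here is a minimal sketch of FedAvg-style parameter averaging. It assumes each institution sends a flattened parameter vector and that the server weights clients by local dataset size; the function name `fedavg` and the toy values are illustrative, not taken from any specific library.

```python
from typing import List, Sequence


def fedavg(client_params: Sequence[Sequence[float]],
           client_sizes: Sequence[int]) -> List[float]:
    """Average flattened parameter vectors, weighted by local dataset size."""
    if not client_params or len(client_params) != len(client_sizes):
        raise ValueError("need one dataset size per client")
    dim = len(client_params[0])
    if any(len(p) != dim for p in client_params):
        raise ValueError("all clients must share one parameter layout")
    total = sum(client_sizes)
    averaged = [0.0] * dim
    for params, size in zip(client_params, client_sizes):
        weight = size / total
        for i, value in enumerate(params):
            averaged[i] += weight * value
    return averaged


# Three hypothetical hospitals with toy two-parameter models.
hospital_params = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
hospital_sizes = [100, 100, 200]
global_params = fedavg(hospital_params, hospital_sizes)
print(global_params)  # [3.5, 4.5]
```

Every entry of every client's vector reaches the server, which is why the shared update can carry information about the local training data.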

Multiple attack classes have been demonstrated:

- Membership inference attacks, which attempt to determine whether a specific patient case contributed to model training

- Gradient inversion attacks, capable of reconstructing input features from shared gradients

- Property inference attacks, extracting statistical characteristics of local datasets

In federated learning in healthcare, these vulnerabilities are particularly consequential. MRI scans, pathology slides, and electronic health records contain legally protected and ethically sensitive information. Even indirect leakage through model updates may raise compliance concerns under GDPR and comparable regulatory regimes.

Differential privacy mechanisms can mitigate these risks by injecting noise into updates, but at a cost to performance: in diagnostic AI systems, reduced sensitivity or specificity directly affects clinical reliability. Although differential privacy can be applied to many federated protocols, its practical impact depends on how information is aggregated. In parameter averaging, injected noise propagates directly through high-dimensional updates. Consensus-based aggregation over discrete labels, the approach introduced in the next section, is inherently more robust to such perturbations, so formal guarantees can be added with a comparatively small empirical performance loss.

In practice, this difference proved more consequential than expected. Differentially private parameter averaging often incurs substantial accuracy loss once meaningful noise is added, whereas the consensus mechanism maintained nearly unchanged performance.
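The contrast between perturbing parameters and perturbing labels can be sketched as follows. Both functions are illustrative assumptions: `clip_and_noise` follows the common clip-then-add-Gaussian-noise pattern for parameter updates, and `randomize_labels` uses simple randomized response as a stand-in for a label-level mechanism. Neither is claimed to be the mechanism used in the work discussed here, and calibrating `noise_std` or `flip_prob` to a formal (ε, δ) guarantee is deliberately left out.

```python
import math
import random
from typing import List, Sequence


def clip_and_noise(update: Sequence[float], clip_norm: float,
                   noise_std: float, rng: random.Random) -> List[float]:
    """Clip an update to L2 norm <= clip_norm, then add Gaussian noise per entry."""
    norm = math.sqrt(sum(u * u for u in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    # Noise is added to every coordinate, so it grows with model dimension.
    return [u * scale + rng.gauss(0.0, noise_std) for u in update]


def randomize_labels(labels: Sequence[int], num_classes: int,
                     flip_prob: float, rng: random.Random) -> List[int]:
    """With probability flip_prob, replace a label by a different random class."""
    noisy = []
    for y in labels:
        if rng.random() < flip_prob:
            others = [c for c in range(num_classes) if c != y]
            noisy.append(rng.choice(others))
        else:
            noisy.append(y)
    return noisy


rng = random.Random(0)  # fixed seed so the sketch is reproducible
noisy_update = clip_and_noise([3.0, 4.0], clip_norm=1.0, noise_std=0.1, rng=rng)
noisy_labels = randomize_labels([0, 1, 1, 2], num_classes=3, flip_prob=0.1, rng=rng)
```

In the first case every coordinate of the model is perturbed; in the second, only a fraction of discrete votes changes, and a subsequent majority vote across institutions can absorb isolated flips.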

An alternative approach, distributed distillation, replaces parameter sharing with soft-label exchange. Participating institutions share posterior probability distributions on a public dataset. While communication costs decrease, soft labels still encode rich decision boundary information and calibrated uncertainty.

This leads to a more fundamental question:

What is the minimal amount of information that institutions must share to collaborate effectively?

Federated Co-Training: Sharing Only Hard Labels

A principled answer to this question is provided in our work “Little is Enough: Boosting Privacy by Sharing Only Hard Labels in Federated Semi-Supervised Learning” (Abourayya et al., 2023). There we introduce Federated Co-Training (FedCT), a protocol that replaces parameter aggregation with consensus-based pseudo-labeling.

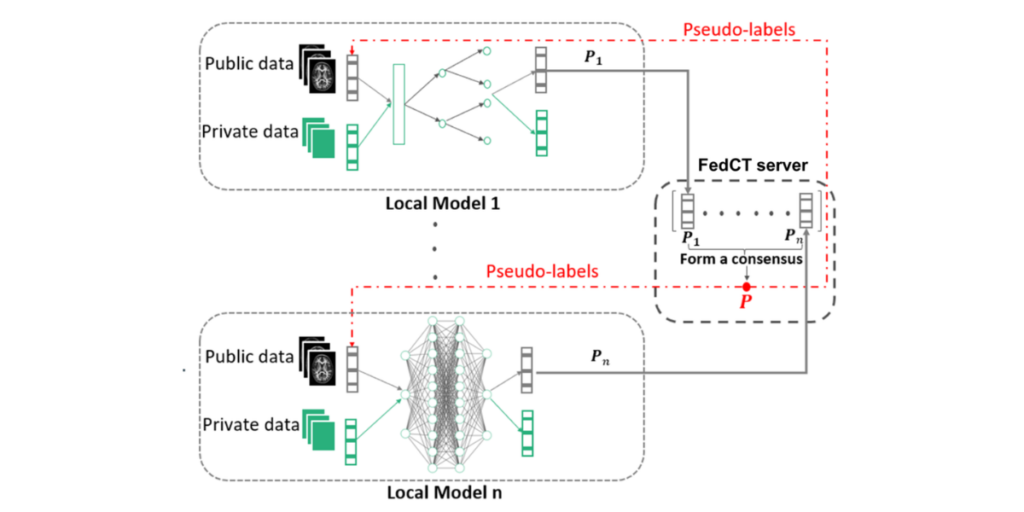

Instead of exchanging gradients or probability distributions, each participating institution:

- Trains a local model on private clinical data.

- Generates hard labels (definitive class assignments) for instances in a shared public dataset.

- Transmits only these discrete labels to a central server.

The server aggregates the predictions, typically via majority vote, and redistributes the consensus pseudo-labels for subsequent local training.

This workflow is illustrated in Figure 1.

Figure 1: Federated Co-Training Workflow © Abourayya et al. (2023)

Local models trained on private hospital data generate predictions on shared public data. Only hard labels are transmitted to the FedCT server, where consensus pseudo-labels are formed and redistributed.
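The server-side step of this workflow can be sketched in a few lines. This is a minimal illustration under stated assumptions: labels are integer class indices, every institution labels the same public samples in the same order, and ties are broken toward the smallest class index (a choice made here for determinism, not specified in the text above).

```python
from collections import Counter
from typing import List, Sequence


def majority_vote(client_labels: Sequence[Sequence[int]]) -> List[int]:
    """client_labels[c][i] is the hard label client c assigns to public sample i."""
    if not client_labels:
        raise ValueError("need labels from at least one client")
    num_samples = len(client_labels[0])
    if any(len(labels) != num_samples for labels in client_labels):
        raise ValueError("every client must label every public sample")
    consensus = []
    for i in range(num_samples):
        votes = Counter(labels[i] for labels in client_labels)
        top = max(votes.values())
        # Assumed tie-break: smallest class index among the most-voted classes.
        consensus.append(min(c for c, n in votes.items() if n == top))
    return consensus


# Hard labels from three hypothetical hospitals on four public samples.
received = [
    [0, 1, 1, 2],
    [0, 1, 0, 2],
    [1, 1, 0, 0],
]
pseudo_labels = majority_vote(received)
print(pseudo_labels)  # [0, 1, 0, 2]
```

The server never sees model parameters or class probabilities, only one integer per institution per public sample.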

The conceptual shift is subtle yet fundamental. Soft labels encode calibrated uncertainty and inter-class similarity structure. Hard labels collapse this representation into a single categorical outcome. From an information-theoretic perspective, collapsing probability distributions into definitive class decisions reduces the amount of information transmitted and narrows the potential attack surface.
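A small sketch makes the collapse tangible. The helper names are hypothetical; the only facts used are that a hard label is the argmax of the predicted distribution and that a single label over K classes can convey at most log2(K) bits.

```python
import math
from typing import Sequence


def to_hard_label(probs: Sequence[float]) -> int:
    """Collapse a probability vector into the index of its largest entry."""
    return max(range(len(probs)), key=lambda k: probs[k])


def max_bits_per_hard_label(num_classes: int) -> float:
    """Upper bound on the information one hard label can carry."""
    return math.log2(num_classes)


# Two very different soft labels: one uncertain, one confident.
uncertain = [0.55, 0.40, 0.05]
confident = [0.98, 0.01, 0.01]

# Both collapse to the same hard label, so the difference in confidence
# and the similarity between classes 0 and 1 is never transmitted.
assert to_hard_label(uncertain) == to_hard_label(confident) == 0

bits = max_bits_per_hard_label(3)  # about 1.58 bits per public sample
```

A soft label, by contrast, is a K-dimensional real vector, so an observer of the exchange learns how confident the local model is on every public sample.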

For federated learning in healthcare, this reduction in shared information directly strengthens privacy resilience.

At first glance, one might expect that sharing less information would inevitably reduce model performance. Surprisingly, we observed no such penalty: the consensus-based protocol matches classical federated averaging and often converges faster, suggesting that majority voting can dampen early training noise more effectively than parameter averaging.

Empirical Privacy Evaluation

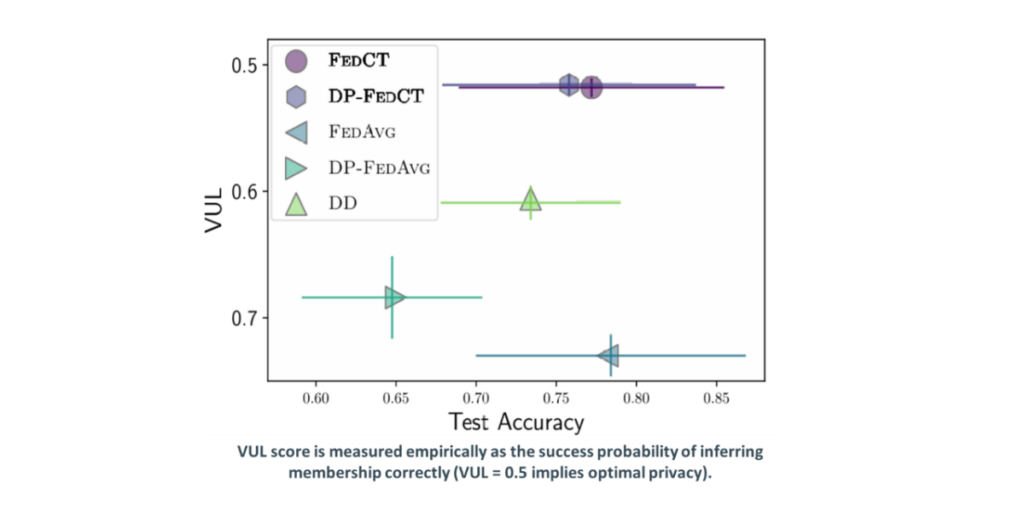

Privacy in the FedCT framework is evaluated using a vulnerability metric (VUL) derived from membership inference attack success rates. A VUL score of 0.5 corresponds to random guessing and thus optimal privacy.
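As a rough illustration of why 0.5 marks the random-guessing baseline, consider a simplified threshold attack on a balanced set of members and non-members. This is an assumed stand-in, not the exact attack or VUL computation used in the evaluation: the attacker guesses "member" whenever some per-sample score (for example, model confidence) exceeds a threshold, and the success rate is the fraction of correct guesses.

```python
from typing import Sequence


def attack_success_rate(member_scores: Sequence[float],
                        nonmember_scores: Sequence[float],
                        threshold: float) -> float:
    """Fraction of correct member/non-member guesses for a threshold attacker."""
    total = len(member_scores) + len(nonmember_scores)
    if total == 0:
        raise ValueError("need at least one score")
    correct = sum(1 for s in member_scores if s > threshold)
    correct += sum(1 for s in nonmember_scores if s <= threshold)
    return correct / total


# Hypothetical scores. A leaky model is more confident on its training cases.
leaky = attack_success_rate([0.9, 0.8, 0.7], [0.2, 0.3, 0.95], threshold=0.5)

# If members and non-members look the same, the attacker is at chance level.
private = attack_success_rate([0.9, 0.1], [0.9, 0.1], threshold=0.5)
assert private == 0.5
```

With balanced groups, an attacker who cannot tell the two score distributions apart ends up at 0.5, which is the sense in which values near 0.5 indicate strong empirical privacy.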

Figure 2 visualizes the privacy–utility trade-off across multiple federated strategies.

The plot compares FedCT, DP-FedCT, FedAvg, DP-FedAvg, and distributed distillation (DD). Lower VUL values indicate stronger privacy.

Empirically, FedCT achieves VUL values close to 0.5, meaning membership inference attacks perform close to random guessing, while maintaining competitive test accuracy. In contrast, classical federated averaging and soft-label approaches exhibit higher privacy vulnerability.

In high-stakes clinical settings, where regulatory scrutiny is substantial, empirical evidence that attacks perform close to random guessing is not incremental; it is decisive.

Performance on Clinical Imaging Tasks

Diagnostic performance remains central to any clinical AI system. We evaluated FedCT on benchmark datasets as well as medical imaging tasks, including pneumonia classification and MRI-based brain tumor detection.

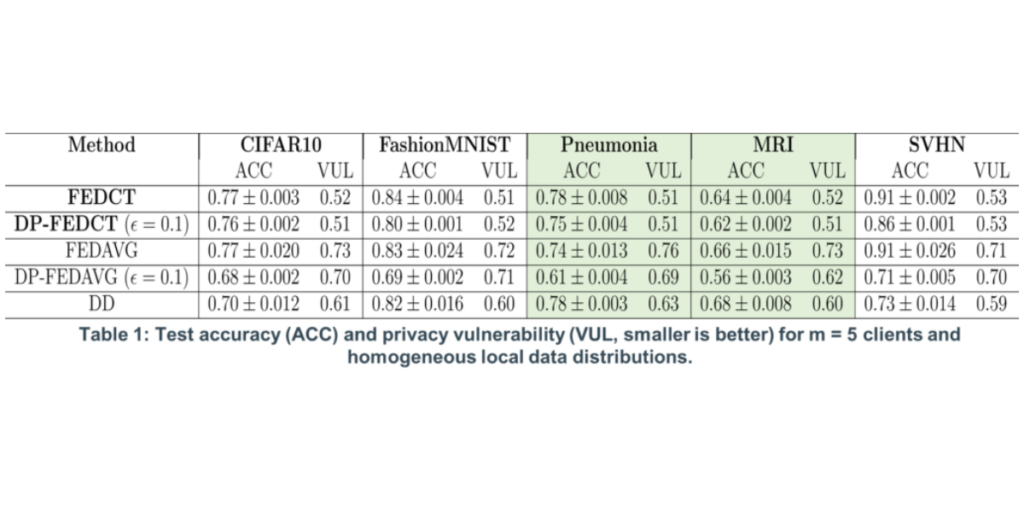

Table 1 reports test accuracy (ACC) and privacy vulnerability (VUL) under homogeneous client distributions with m = 5 clients.

Table 1: Test accuracy (ACC) and privacy vulnerability (VUL); smaller VUL indicates stronger privacy protection. © Abourayya et al. (2023)

Across both pneumonia and MRI tasks, FedCT achieves accuracy comparable to FedAvg while significantly improving privacy metrics.

These findings suggest that in federated learning in healthcare, privacy gains need not come at the expense of clinically relevant predictive performance.

Robustness Under Hospital Data Heterogeneity

Healthcare data is inherently heterogeneous. Differences in imaging devices, acquisition protocols, patient demographics, and institutional practices produce non-iid distributions across sites. Such heterogeneity challenges classical parameter aggregation. FedCT mitigates extreme local biases through consensus aggregation. Majority voting smooths idiosyncratic decision boundaries without requiring alignment of internal model representations. Empirical evaluations demonstrate that under heterogeneous client distributions, FedCT maintains competitive accuracy while preserving its privacy advantage.

Interpretability and Model Flexibility

Interpretability is a core requirement in clinical AI. Regulatory frameworks increasingly demand transparency and auditability of automated decision systems.

Standard federated learning assumes homogeneous neural network architectures to enable parameter averaging. This restricts the use of inherently interpretable models such as decision trees, random forests, and rule ensembles. FedCT decouples collaboration from parameter alignment. Because only predictions are exchanged, participating institutions may employ heterogeneous model classes, including such interpretable models, which are often preferred in structured clinical prediction tasks. For federated learning in healthcare, this architectural flexibility expands applicability in environments requiring explainability.
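The decoupling can be illustrated with a short sketch in which the only shared contract is a `predict` method that returns a class index. The two model classes below are hypothetical toy stand-ins (a single-feature rule and a nearest-centroid classifier), chosen to show that models with no common parameterization can still vote on the same public samples.

```python
from collections import Counter
from typing import List, Protocol, Sequence


class HardLabelModel(Protocol):
    def predict(self, x: Sequence[float]) -> int: ...


class ThresholdRule:
    """Toy interpretable rule: class 1 if one feature exceeds a threshold."""

    def __init__(self, feature: int, threshold: float) -> None:
        self.feature = feature
        self.threshold = threshold

    def predict(self, x: Sequence[float]) -> int:
        return int(x[self.feature] > self.threshold)


class NearestCentroid:
    """Toy classifier: index of the closest class centroid."""

    def __init__(self, centroids: Sequence[Sequence[float]]) -> None:
        self.centroids = centroids

    def predict(self, x: Sequence[float]) -> int:
        def sq_dist(c: Sequence[float]) -> float:
            return sum((a - b) ** 2 for a, b in zip(x, c))
        return min(range(len(self.centroids)),
                   key=lambda k: sq_dist(self.centroids[k]))


def consensus_labels(models: Sequence[HardLabelModel],
                     public_data: Sequence[Sequence[float]]) -> List[int]:
    """Majority vote over hard labels from arbitrary model classes."""
    labels = []
    for x in public_data:
        votes = Counter(model.predict(x) for model in models)
        labels.append(votes.most_common(1)[0][0])
    return labels


# Three hypothetical sites, each with a different kind of local model.
site_models = [ThresholdRule(0, 0.5), ThresholdRule(1, 0.5),
               NearestCentroid([[0.0, 0.0], [1.0, 1.0]])]
public = [[0.9, 0.8], [0.1, 0.2], [0.9, 0.3]]
print(consensus_labels(site_models, public))  # [1, 0, 1]
```

Parameter averaging has no meaningful definition across these three models, whereas voting on labels works unchanged.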

Communication Efficiency

Large neural architectures can contain millions of parameters. In multi-institutional hospital networks, transmitting such updates repeatedly incurs substantial communication overhead.

FedCT reduces communication volume by up to two orders of magnitude compared to FedAvg, as only discrete class labels are exchanged.
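A back-of-the-envelope calculation shows where savings of this size can come from. All numbers below are hypothetical and chosen only for illustration; they are not taken from the experiments. The sketch assumes 32-bit parameters, tightly packed labels, and counts only one client's upload in a single communication round.

```python
import math


def parameter_upload_bytes(num_params: int, bytes_per_param: int = 4) -> int:
    """Bytes needed to send one full set of model parameters."""
    return num_params * bytes_per_param


def label_upload_bytes(num_public_samples: int, num_classes: int) -> int:
    """Bytes needed to send one hard label per public sample, bit-packed."""
    bits_per_label = max(1, math.ceil(math.log2(num_classes)))
    return math.ceil(num_public_samples * bits_per_label / 8)


# Hypothetical setting: a 100,000-parameter model versus
# 10,000 public samples with 10 classes.
param_bytes = parameter_upload_bytes(100_000)   # 400,000 bytes
label_bytes = label_upload_bytes(10_000, 10)    # 5,000 bytes
ratio = param_bytes / label_bytes
print(ratio)  # 80.0
```

The label payload scales with the size of the public dataset rather than with model size, so the gap widens for larger architectures and narrows for larger public datasets.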

This efficiency enhances scalability in international research consortia and resource-constrained clinical environments, further strengthening the practicality of federated learning in healthcare.

Extension to Clinical Language Models

Healthcare AI increasingly leverages large language models (LLMs) for tasks such as clinical note summarization, phenotyping, and automated coding.

Preliminary investigations suggest that consensus-based collaboration may extend beyond imaging and structured prediction tasks to generative models. The pseudo-labeling mechanism provides a stable collaborative signal without sharing gradient updates derived from sensitive textual data, which indicates that minimal-information protocols could carry over to language-based clinical AI.

Open Questions and Future Directions

While FedCT significantly strengthens privacy in federated learning in healthcare, several open questions remain:

- How sensitive is performance to the representativeness of the shared public dataset?

- Can synthetic or generative data serve as robust public anchors?

- How can consensus aggregation be strengthened against adversarial label manipulation?

- What are the theoretical privacy bounds under extreme heterogeneity?

Future work at the intersection of federated learning, semi-supervised learning, and privacy-preserving machine learning will further clarify these questions.

Conclusion: Minimal Information as a Clinical Design Principle

Federated learning in healthcare seeks to enable collaboration without centralizing patient data. Yet parameter sharing and soft-label exchange introduce residual privacy risks.

Federated co-training demonstrates that exchanging only hard labels reduces the information available to potential attackers while maintaining competitive diagnostic performance. In that sense, it does not abandon federated learning’s original philosophy; it completes it. By minimizing shared information, the approach aligns with regulatory principles of data minimization and privacy by design.

For Life Sciences & Health research, this paradigm offers:

- Stronger empirical privacy guarantees

- Compatibility with interpretable models

- Robustness under heterogeneous hospital data

- Significant communication efficiency

Improving federated learning in healthcare may not require increasingly complex protective mechanisms layered on top of existing protocols. It may instead require rethinking, at a more fundamental level, what information ever needed to be shared in the first place.